Timothy Gowers's Blog

March 20, 2026

Group and semigroup puzzles and a possible Polymath project

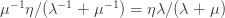

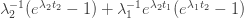

An Artin-Tits group is a group with a finite set of generators  in which every relation is of the form

in which every relation is of the form  or

or  for some positive integer

for some positive integer  , where

, where  and

and  are two of the generators. In particular, the commutation relation

are two of the generators. In particular, the commutation relation  is allowed (it is the case

is allowed (it is the case  of the first type of relation) and so is the braid relation

of the first type of relation) and so is the braid relation  (it is the case

(it is the case  of the second type of relation). This means that Artin-Tits groups include free groups, free Abelian groups, and braid groups: for example, the braid group on

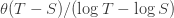

of the second type of relation). This means that Artin-Tits groups include free groups, free Abelian groups, and braid groups: for example, the braid group on  strands has a presentation with generators

strands has a presentation with generators  , where

, where  represents a twist of the

represents a twist of the  th and

th and  st strands, and relations

st strands, and relations  if

if  and

and  .

.
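For readers who like to see a presentation written out mechanically, here is a minimal Python sketch (Python is used for all the code sketches here) that lists the relations of the braid group $B_n$ exactly as described above. Writing $\sigma_i$ as the token `s{i}` and the name `braid_group_relations` are illustrative choices, not anything taken from the games.

```python
from itertools import combinations


def braid_group_relations(n):
    """Return (generators, relations) for the braid group B_n.

    sigma_i is written as the token "s{i}" and a word is a tuple of tokens,
    so ("s1", "s2", "s1") stands for sigma_1 sigma_2 sigma_1.
    """
    gens = [f"s{i}" for i in range(1, n)]
    relations = []
    for i, j in combinations(range(1, n), 2):
        si, sj = f"s{i}", f"s{j}"
        if j - i >= 2:
            # Far-apart strands: commutation (the case m = 1 of the first type).
            relations.append(((si, sj), (sj, si)))
        else:
            # Neighbouring strands: braid relation (m = 1 of the second type).
            relations.append(((si, sj, si), (sj, si, sj)))
    return gens, relations


gens, rels = braid_group_relations(4)
for lhs, rhs in rels:
    print(" ".join(lhs), "=", " ".join(rhs))
```

For $n=4$ this prints the two braid relations $\sigma_1\sigma_2\sigma_1=\sigma_2\sigma_1\sigma_2$ and $\sigma_2\sigma_3\sigma_2=\sigma_3\sigma_2\sigma_3$ together with the commutation relation $\sigma_1\sigma_3=\sigma_3\sigma_1$.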

A few weeks ago, I asked ChatGPT for a simple example of a word problem for groups or semigroups that was not known to be decidable and also not known to be undecidable. It turns out that the word problem for many Artin-Tits groups comes into that category: the simplest example where the status is not known is the group with four generators $a,b,c$ and $d$ where $a$ and $c$ commute and all other pairs of generators satisfy the braid relation $xyx=yxy$.
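The same group can be written down as data. The sketch below assumes the letters `a`, `b`, `c`, `d` with `a` and `c` the commuting pair; the helper `alternating` is a hypothetical name. It reflects the fact that both types of Artin-Tits relation equate two alternating words of the same length ($2m$ for the first type, $2m+1$ for the second), so commutation corresponds to length 2 and the braid relation to length 3.

```python
from itertools import combinations

# Assumed letters: a and c commute, every other pair satisfies xyx = yxy.
GENERATORS = "abcd"
COMMUTING_PAIRS = {frozenset("ac")}


def alternating(x, y, length):
    """The alternating word x y x y ... of the given length."""
    return "".join(x if k % 2 == 0 else y for k in range(length))


def relations():
    """Each defining relation as a pair of equal positive words."""
    rels = []
    for x, y in combinations(GENERATORS, 2):
        # Length 2 gives xy = yx; length 3 gives xyx = yxy.
        length = 2 if frozenset((x, y)) in COMMUTING_PAIRS else 3
        rels.append((alternating(x, y, length), alternating(y, x, length)))
    return rels


for lhs, rhs in relations():
    print(f"{lhs} = {rhs}")
```

The output is one commutation relation, `ac = ca`, and five braid relations such as `aba = bab` and `cdc = dcd`.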

My interest in this was initially that I was looking for a toy model from which one could learn something about how mathematicians judge theorems to be interesting. I started with a remarkable semigroup discovered by G. S. Tseytin that has five generators, seven very simple relations, and an undecidable word problem. From that I created a puzzle game that you might be interested to play. Each puzzle is equivalent to an instance of the word problem for Tseytin’s semigroup, but the interface makes it much more convenient to change words using the relations than it would be to do it with pen and paper.

I was hoping that because every mathematical problem can in principle be encoded as a puzzle in this game, one might be able to build a sort of “alien mathematics”, where a theorem was an equality between words, and a definition was a decision to introduce a new (and redundant) generator g, together with a relation of the form g = w, where w is a word in the current alphabet. Theorems would be particularly interesting if they were equalities between words that could be established only by a chain of equalities that went through much longer words, and definitions would be useful if the new generators satisfied particularly concise relations (which would allow one to build “theories” within the system). I still hope to find a word problem that will allow such a project to take off, but in the end, after a lot of playing around with the game linked to above, I have decided that Tseytin’s semigroup is not suitable. The reason is that it is designed so that arbitrary instances of the word problem for groups can be encoded as word problems in this semigroup, and once one gets used to the game, one starts to see how that encoding can work. Furthermore, one seems to be driven towards the encoding — I don’t get the impression that there’s a whole other region of this semigroup to explore that has nothing to do with the kinds of words that come up in the encoding. And if that impression is correct, then one might as well start with the word problem for some group in unencoded form, or alternatively look for another semigroup. Nevertheless, I find the Tseytin game quite enjoyable: I won’t say more about it here but have written a fairly comprehensive tutorial that you can open up and read if you follow the link above.
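One way to make "a theorem is an equality between words" concrete is a brute-force search for a chain of single-relation moves from one word to another, capped at some maximum word length. The sketch below assumes Tseytin's seven relations in the form in which they are usually quoted (treat that list as an assumption to be checked against a primary source); `equal_within` and its `max_len` parameter are hypothetical names. Since the word problem here is undecidable, no fixed cap works in general, and the "interesting" theorems described above are precisely those that need a large `max_len`.

```python
from collections import deque

# Tseytin's semigroup as it is usually quoted (an assumption here):
# generators a-e and seven relations.
TSEYTIN = [("ac", "ca"), ("ad", "da"), ("bc", "cb"), ("bd", "db"),
           ("eca", "ce"), ("edb", "de"), ("cca", "ccae")]


def neighbours(word, relations):
    """All words obtained by one application of a relation, in either direction."""
    for lhs, rhs in relations:
        for old, new in ((lhs, rhs), (rhs, lhs)):
            start = word.find(old)
            while start != -1:
                yield word[:start] + new + word[start + len(old):]
                start = word.find(old, start + 1)


def equal_within(u, v, relations, max_len):
    """Breadth-first search for a chain of equalities from u to v that never
    passes through a word longer than max_len.

    True means u = v in the semigroup. False only means that no chain exists
    under this length bound; the words might still be equal via longer words.
    """
    if u == v:
        return True
    seen = {u}
    queue = deque([u])
    while queue:
        word = queue.popleft()
        for nxt in neighbours(word, relations):
            if len(nxt) > max_len or nxt in seen:
                continue
            if nxt == v:
                return True
            seen.add(nxt)
            queue.append(nxt)
    return False


print(equal_within("acd", "cda", TSEYTIN, max_len=3))  # ac = ca, then ad = da
```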

This is perhaps the moment to say that the words “I created a puzzle game” are slightly misleading. For one thing, I discussed the idea of gamifying Tseytin’s semigroup about three years ago with Mirek Olšák, a former member of my automatic theorem proving group, and he created a basic prototype in Python. But the main point is that I do not have the programming skills to create a game that can be played in a web browser — I vibecoded it using ChatGPT.

After that experience, I thought that maybe I would have better luck if instead of looking for a group or semigroup with undecidable word problem, which might well have been explicitly designed with some encoding in mind, I looked for a word problem for which the decidability status was unknown. That way, it wouldn’t have been designed to be undecidable, but might nevertheless just happen to be undecidable and provide a nice playground of the kind I was (and still am) after. And that is what led me to the Artin-Tits groups.

However, those don’t seem to be suitable either, because it is conjectured that they all have decidable word problems. I have created a game for the Artin-Tits group mentioned above, which you can also play if you want, but I have found it very hard to create interesting puzzles. That is, I found it difficult to find words that are equivalent to the identity but that are not easily shown to be equivalent to the identity. (One nice example comes from the fact that the subgroup generated by $a,b$ and $c$ is isomorphic to the braid group $B_4$ with four strands. The late Patrick Dehornoy found a very nice example of a braid with four strands that is equal to the identity but not in a completely trivial way. A picture of it can be found on the first page of this paper.)

This is where a potential Polymath project comes in. An initial goal would be to determine the decidability status of this one small Artin-Tits group: if we managed that, then we could consider the more general problem. And the way I envisage approaching this initial goal is an iterative process that runs as follows.

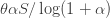

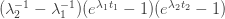

1. Devise an algorithm $A_1$ for solving word problems in the group.
2. If the current algorithm is $A_k$, then search for a puzzle $P_k$ that $A_k$ fails to solve.
3. If the search is successful, then devise an algorithm $A_{k+1}$ that solves all the puzzles that $A_k$ solves, and also solves $P_k$. Make $A_{k+1}$ the current algorithm and return to step 2. Devising $A_{k+1}$ can be done by playing the game with puzzle $P_k$ several times until one has the feeling that one has solved it in a systematic way.
4. If the search is unsuccessful and the current algorithm is $A_k$, then attempt to prove inductively that $A_k$ always succeeds: that is, to prove that if $A_k$ solves $w$ and $w'$ is obtained from $w$ by applying a single relation in the group, then $A_k$ solves $w'$.
5. (Maybe) If the inductive step doesn’t seem to work, then try to use that failure to devise a puzzle that defeats $A_k$ and return to step 3.

I have done a couple of iterations already, with the result that I now have an algorithm, which I’ll call $A$, that solves (basically instantaneously) every puzzle I throw at it, including the one derived from Dehornoy’s braid mentioned above. So a subgoal of the initial goal is to find a puzzle that has a solution but that $A$ doesn’t solve. If we can’t, then maybe it is worth trying to prove that $A$ solves the word problem for this group. One comment about the algorithm $A$ is that it never increases the length of a word, though it often does moves that preserve the length. I was told by ChatGPT that it is an unsolved problem whether every braid that is equal to the identity can be reduced to the identity without ever increasing the number of crossings. Of course that isn’t a guarantee that it really is unsolved, but if it is, then that’s another interesting problem. It also means that I would be extremely interested if someone found an example of a word in the Artin-Tits group that can be reduced to the identity but only if one starts by lengthening it.
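To make the length question concrete, here is a brute-force sketch of the weaker idea of "never lengthening the word"; it is not the algorithm $A$ discussed above. It assumes the letters `a`, `b`, `c`, `d` (with `a` and `c` commuting) and the upper-case-for-inverse convention from the tip at the end of this post, and it uses three-letter rewrites such as $ABa=bAB$ that follow from the braid relation. A result of `True` certifies that the word is the identity; `False` only says that no length-non-increasing route exists, which is exactly how a word that needs lengthening would show up.

```python
from collections import deque
from itertools import permutations

# Assumed letters: a and c commute, every other pair braids (xyx = yxy).
# Upper case stands for an inverse, so "A" is a^{-1}.
GENERATORS = "abcd"
COMMUTING = {frozenset("ac")}


def inv(letter):
    return letter.swapcase()


def local_rules():
    """Length-preserving rewrites (lhs, rhs), each a valid identity in the group."""
    rules = set()
    for x, y in permutations(GENERATORS, 2):
        X, Y = inv(x), inv(y)
        if frozenset((x, y)) in COMMUTING:
            # xy = yx implies that all four sign patterns commute.
            for p, q in ((x, y), (X, Y), (x, Y), (X, y)):
                rules.add((p + q, q + p))
        else:
            rules.add((x + y + x, y + x + y))  # xyx = yxy
            rules.add((X + Y + X, Y + X + Y))  # inverse of the braid relation
            rules.add((X + Y + x, y + X + Y))  # e.g. ABa = bAB
            rules.add((X + y + x, y + x + Y))  # e.g. Aba = baB
    # Every identity may be used in both directions.
    return rules | {(rhs, lhs) for lhs, rhs in rules}


def successors(word, rules):
    """Words reachable by one cancellation or one length-preserving rewrite."""
    for i in range(len(word) - 1):
        if word[i + 1] == inv(word[i]):
            yield word[:i] + word[i + 2:]
    for lhs, rhs in rules:
        start = word.find(lhs)
        while start != -1:
            yield word[:start] + rhs + word[start + len(lhs):]
            start = word.find(lhs, start + 1)


def trivial_without_lengthening(word):
    """Breadth-first search for a route to the empty word that never lengthens it.

    True certifies that the word equals the identity. False is inconclusive:
    the word might still be trivial via a route that first makes it longer.
    """
    rules = local_rules()
    seen = {word}
    queue = deque([word])
    while queue:
        current = queue.popleft()
        if current == "":
            return True
        for nxt in successors(current, rules):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return False


print(trivial_without_lengthening("abaBAB"))  # True: aba -> bab, then cancel
print(trivial_without_lengthening("abAB"))    # False: this word is not the identity
```

Because no move lengthens the word, the search space is finite and the search always terminates; the price is that it can only ever be a sufficient test.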

I’m hoping that this will be an enjoyable project for people who like both mathematics and programming. On the maths side, there is an unsolved problem to think about, and on the programming side there are lots of possibilities: for example, one could write programs that explore the space of words equal to the identity, trying to do so in such a way that there is a reasonable chance of reaching a word where it isn’t obvious how to reverse all the steps and get back to the identity. The game comes with a “sandbox” that has a few tools for generating words, but at the moment it is fairly primitive, and I would welcome suggestions for how to improve it.
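A standard way to manufacture words that equal the identity is to multiply together conjugates $uru^{-1}$ of relators $r$ (words such as $abaBAB$ that the defining relations make trivial) and then cancel adjacent inverse pairs. Every word equal to the identity arises this way, although random choices tend to produce puzzles that are easy to undo. The sketch below is a hypothetical generator along those lines, again assuming the letters `a`, `b`, `c`, `d` with `a` and `c` commuting; it is not the sandbox's implementation.

```python
import random

# Assumed letters: a and c commute, every other pair braids; upper case = inverse.
GENERATORS = "abcd"
COMMUTING = {frozenset("ac")}


def inverse(word):
    """Formal inverse: reverse the word and swap the case of every letter."""
    return word[::-1].swapcase()


def free_reduce(word):
    """Cancel adjacent pairs such as aA or Aa until none remain."""
    out = []
    for letter in word:
        if out and out[-1] == letter.swapcase():
            out.pop()
        else:
            out.append(letter)
    return "".join(out)


def relators():
    """One relator per pair: xyXY if x, y commute, xyxYXY if they braid."""
    rels = []
    for i, x in enumerate(GENERATORS):
        for y in GENERATORS[i + 1:]:
            if frozenset((x, y)) in COMMUTING:
                lhs, rhs = x + y, y + x
            else:
                lhs, rhs = x + y + x, y + x + y
            rels.append(lhs + inverse(rhs))
    return rels


def random_trivial_word(n_factors, max_conj_len=3, seed=None):
    """Freely reduced product of n_factors random conjugates u r u^{-1},
    where r is a relator or its inverse. The result always equals the identity."""
    rng = random.Random(seed)
    letters = GENERATORS + GENERATORS.upper()
    rels = relators()
    word = ""
    for _ in range(n_factors):
        r = rng.choice(rels)
        if rng.random() < 0.5:
            r = inverse(r)
        u = "".join(rng.choice(letters) for _ in range(rng.randint(0, max_conj_len)))
        word = free_reduce(word + u + r + inverse(u))
    return word


print(random_trivial_word(4, seed=1))
```

More interesting puzzles would presumably come from then applying relations at random to disguise the word, or from filtering out candidates that a solver such as the length-non-increasing search undoes easily.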

It seems to me that as Polymath projects go, one can’t really lose: either we find an algorithm for the word problem, which establishes an unknown (at least according to ChatGPT) case of a decidability problem, or we find a suite of harder and harder puzzles, creating a more and more challenging and entertaining game and obtaining a deeper understanding of the Artin-Tits group in the process.

This Artin-Tits game has only a rather rudimentary and not very good tutorial created by ChatGPT. It’s easy to play once you’ve got the hang of it, and in the end I think the easiest way to get the hang of it is to watch someone else play it for a couple of minutes. I have therefore created a video tutorial, which you can find here. The video itself lasts about 25 minutes, but the tutorial part is under 10 minutes: what makes the video longer is an explanation of various features for making it easier to create puzzles, and also an explanation of how the algorithm works, which again is much easier to explain if I can demonstrate it on screen than if I have to write down some text. (I do have some text, since that is what I used as a prompt for ChatGPT to implement the algorithm, but it took a few iterations to get it working properly, so now I’m not sure what the quality of the code will be like, or even whether it is doing exactly what I want, though it appears to be.) Although I have embedded the video into this post, if you actually want to see what is going on you will probably need to watch it on YouTube using the full screen. I recommend not watching the explanation of the algorithm until you have played the game a few times first. Also, I should warn you that the games in the Advanced category are not all soluble, but games 1, 5 and 6 definitely are. Another thing I forgot to say on the video is that if you want you can “rotate” a word by clicking on one of its letters and dragging it to the right or left. If the word is equal to the identity, then its rotations will be as well, so this is a valid move. There is also a button for disabling this rotation facility if you want the puzzle to be slightly more challenging.
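The claim that rotation is a valid move has a one-line justification: if $w=uv$ then the rotation $vu$ equals $u^{-1}(uv)u$, a conjugate of $w$, and a conjugate of the identity is the identity. The small check below illustrates this on a cyclically reduced word, using upper-case letters for inverses; the function names are illustrative.

```python
def inverse(word):
    """Formal inverse: reverse the word and swap case (upper case = inverse)."""
    return word[::-1].swapcase()


def free_reduce(word):
    """Cancel adjacent inverse pairs such as bB until none remain."""
    out = []
    for letter in word:
        if out and out[-1] == letter.swapcase():
            out.pop()
        else:
            out.append(letter)
    return "".join(out)


def rotate(word, k):
    """Move the first k letters to the end of the word."""
    if not word:
        return word
    k %= len(word)
    return word[k:] + word[:k]


# If w = u v, then v u = u^{-1} (u v) u: every rotation is a conjugate of w.
w = "abaBAB"
for k in range(len(w)):
    u = w[:k]
    assert rotate(w, k) == free_reduce(inverse(u) + w + u)
print("every rotation of", w, "is a conjugate of it")
```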

A quick note about the availability of the two games. They are hosted on the Netlify platform. I am on their free plan, which gives me a certain number of “credits” each month. I’m not sure how quickly these will run out, largely because I have no idea how many people will actually think it interesting to play the games. If they do run out, then the games will cease to be available until the credits are renewed, which for me happens on the 10th of each month. If this has happened and you are keen to play one of the games, another option is to download the html files and open them in your browser. Here is a link to the Artin-Tits game and here is one to the Tseytin game. If you are feeling particularly public-spirited, especially if you think you will play quite a lot, then you might consider doing that anyway, so that the Netlify credits run down more slowly. If the running out of the credits is quick enough for all this to be a real issue, then I may move to a paid plan.

I’ll finish with a quick tip for playing the Artin-Tits game, which I mentioned on the video but perhaps didn’t stress enough. Many of the moves consist in selecting three consecutive letters and using a relation of the form $xyx=yxy$ to change them. Easy examples of this are replacement rules such as $aba\to bab$. But what if inverses are involved? I’ll represent inverses of generators with upper-case letters, so for example $ABa$ represents $a^{-1}b^{-1}a$, which in the game would be a white $a$ followed by a white $b$ followed by a black $a$. The word $ABa$ turns out to equal $bAB$. To remember this, a simple rule is that two letters of the same colour can be bracketed together and “pushed past” the third letter, which retains its colour but changes its value. Here, for example, we write $ABa$ as $(AB)a$ and then swap them over, changing $a$ into $b$ in the process, getting $b(AB)$, or $bAB$ without the brackets. In group-theoretic terms, this is of course saying that $a$ and $b$ are conjugates, and that $AB$ is what conjugates one to the other. But when playing the game it is convenient to remember it by thinking that when you see a subword such as $bAB$, you can push the $AB$ (and in particular the $B$) to the left, getting $ABa$.
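Identities such as $ABa=bAB$ can be sanity-checked numerically. The reduced Burau representation sends two generators satisfying the braid relation to $2\times 2$ matrices that satisfy it too, so any identity that follows from the braid relation between $a$ and $b$ must also hold between the corresponding matrix products. The sketch below evaluates the matrices at $t=2$ with exact fractions; treating `a` and `b` as $\sigma_1$ and $\sigma_2$ is an assumption, and equal matrices are only a necessary condition, not a proof that two words are equal.

```python
from fractions import Fraction


def mat_mul(m, n):
    """Product of two 2x2 matrices given as nested tuples."""
    return tuple(
        tuple(sum(m[i][k] * n[k][j] for k in range(2)) for j in range(2))
        for i in range(2)
    )


def mat_inv(m):
    """Inverse of an invertible 2x2 matrix."""
    (p, q), (r, s) = m
    det = p * s - q * r
    return ((s / det, -q / det), (-r / det, p / det))


def burau(word, t=Fraction(2)):
    """Image of a word in a, b, A, B under the reduced Burau representation of B_3,
    evaluated at the number t (a = sigma_1, b = sigma_2, upper case = inverse)."""
    sigma = {
        "a": ((-t, Fraction(1)), (Fraction(0), Fraction(1))),
        "b": ((Fraction(1), Fraction(0)), (t, -t)),
    }
    images = dict(sigma)
    images.update({g.upper(): mat_inv(m) for g, m in sigma.items()})
    result = ((Fraction(1), Fraction(0)), (Fraction(0), Fraction(1)))
    for letter in word:
        result = mat_mul(result, images[letter])
    return result


print(burau("ABa") == burau("bAB"))  # the tip's identity: True
print(burau("aba") == burau("bab"))  # the braid relation: True
print(burau("ab") == burau("ba"))    # a and b do not commute: False
```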

September 22, 2025

Creating a database of motivated proofs

It’s been over three years since my last post on this blog and I have sometimes been asked, understandably, whether the project I announced in my previous post was actually happening. The answer is yes — the grant I received from the Astera Institute has funded several PhD students and a couple of postdocs, and we have been busy. In my previous post I suggested that I would be open to remote collaboration, but that has happened much less, partly because a Polymath-style approach would have been difficult to manage while also ensuring that my PhD students would have work that they could call their own to put in their theses.

In general I don’t see a satisfactory solution to that problem, but in this post I want to mention a subproject of the main project that is very much intended to be a large public collaboration. A few months ago, a call came out from Renaissance Philanthropies saying that they were launching a $9m AI for Math Fund to spend on projects in the general sphere of AI and mathematics, and inviting proposals. One of the categories that they specifically mentioned was creating new databases, and my group submitted a proposal to create a database of what we call “structured motivated proofs,” a piece of terminology that I will explain in a moment. I am happy to report that our proposal was one of the 29 successful ones. Since a good outcome to the project will depend on collaboration from many people outside the group, we need to publicize it, which is precisely the purpose of this post. Below I will be more specific about the kind of help we are looking for.

Why might yet another database of theorems and proofs be useful?

The underlying thought behind this project is that AI for mathematics is being held back not so much by an insufficient quantity of data as by the wrong kind of data. All mathematicians know, and some of us enjoy complaining about it, that it is common practice, when presenting a proof in a mathematics paper or even a textbook, to hide the thought processes that led to the proof. Often this does not matter too much, because the thought processes may be standard ones that do not need to be spelt out to the intended audience. But when proofs start to get longer and more difficult, they can be hard to read because one has to absorb definitions and lemma statements that are not obviously useful, are presented as if they appeared from nowhere, and demonstrate their utility only much later in the argument.

A sign that this is a problem for AI is the behaviour one observes after asking an LLM to prove a statement that is too difficult for it. Very often, instead of admitting defeat, it will imitate the style of a typical mathematics paper and produce rabbits out of hats, together with arguments later on that those rabbits do the required job. The problem is that, unlike with a correct mathematics paper, when one scrutinizes the arguments carefully, one finds that they are wrong. However, it is hard to distinguish an incorrect rabbit together with an incorrect argument justifying it (especially if the argument does not go into full detail) from a correct one, so the kinds of statistical methods used by LLMs do not have an easy way to penalize the incorrectness.

Of course, that does not mean that LLMs cannot do mathematics at all — they are remarkably good at it, at least compared with what I would have expected three years ago. How can that be, given the problem I have discussed in the previous paragraph?

The way I see it (which could change — things move so fast in this sphere), the data that is currently available to train LLMs and other systems is very suitable for a certain way of doing mathematics that I call guess and check. The rough idea is that you do routine parts of an argument without any fuss (and an LLM can do them too because it has seen plenty of similar examples), but if the problem as a whole is not routine, then at some point you have to stop and think, often because you need to construct an object that has certain properties (I mean this in a rather general way — the “object” might be a lemma that will split up the proof in a nice way) and it is not obvious how to do so. The guess-and-check approach to such moments is what it says: you make as intelligent a guess as you can and then see whether it has the properties you wanted. If it doesn’t, you make another guess, and you keep going until you get lucky.

The reason an LLM might be tempted to use this kind of approach is that the style of mathematical writing I described above makes it look as though that is what we as mathematicians do. Of course, we don’t do that, but we often omit to mention all the failed guesses we made and how we carefully examined why they failed, modifying them in appropriate ways in response, until we finally converged on an object that worked. We also don’t mention the reasoning that often takes place before we make the guess, saying to ourselves things like “Clearly an Abelian group can’t have that property, so I need to look for a non-Abelian group.”

Intelligent guess and check works well a lot of the time, particularly when carried out by an LLM that has seen many proofs of many theorems. I have often been surprised when I have asked an LLM a problem of the form $\exists x\ P(x)$, where $P$ is some property that is hard to satisfy, and the LLM has had no trouble answering it. But somehow when this happens, the flavour of the answer given by the LLM leaves me with the impression that the technique it has used to construct $x$ is one that it has seen before and regards as standard.

If the above picture of what LLMs can do is correct (the considerations for reinforcement-learning-based systems such as AlphaProof are not identical but I think that much of what I say in this post applies to them too for slightly different reasons), then the likely consequence is that if we pursue current approaches, then we will reach a plateau: broadly speaking they will be very good at answering a question if it is the kind of question that a mathematician with the right domain expertise and good instincts would find reasonably straightforward, but will struggle with anything that is not of that kind. In particular, they will struggle with research-level problems, which are, almost by definition, problems that experts in the area do not find straightforward. (Of course, there would probably be cases where an LLM spots relatively easy arguments that the experts had missed, but that wouldn’t fundamentally alter the fact that they weren’t really capable of doing research-level mathematics.)

But what if we had a database of theorems and proofs that did not hide the thought processes that lay behind the non-obvious details of the proofs? If we could train AI on a database of accounts of proof discoveries and if, having done so, we then asked it to provide similar accounts, then it would no longer resort to guess-and-check when it got stuck, because the proof-discovery accounts it had been trained on would not be resorting to it. There could be a problem getting it to unlearn its bad habits, but I don’t think that difficulty would be impossible to surmount.

The next question is what such a database might look like. One could just invite people to send in stream-of-consciousness accounts of how they themselves found certain proofs, but that option is unsatisfactory for several reasons.

- It can be very hard to remember where an idea came from, even a few seconds after one has had it; in that respect it is like a dream, the memory of which becomes rapidly less vivid as one wakes up.
- Often an idea will seem fairly obvious to one person but not to another.
- The phrase “motivated proof” means different things to different people, so without a lot of careful moderation and curation of entries, there is a risk that a database would be disorganized and not much more helpful than a database of conventionally written proofs.
- A stream-of-consciousness account could end up being a bit too much about the person who finds the proof and not enough about the mathematical reasons for the proof being feasibly discoverable.

To deal with these kinds of difficulties, we plan to introduce a notion of a structured motivated proof, by which we mean a proof that is generated in a very particular way that I will partially describe below. A major part of the project, and part of the reason we needed funding for it, is to create a platform that will make it convenient to input structured motivated proofs and difficult to insert the kinds of rabbits out of hats that make a proof mysterious and unmotivated. In this way we hope to gamify the task of creating the database, challenging people to input into our system proofs of certain theorems that appear to rely on “magic” ideas, and perhaps even offering prizes for proofs that contain steps that appear in advance to be particularly hard to motivate. (An example: the solution by Ellenberg and Gijswijt of the cap-set problem uses polynomials in a magic-seeming way. The idea of using polynomials came from an earlier paper of Croot, Lev and Pach that proved a closely related theorem, but in that paper it just appears in the statement of their Lemma 1, with no prior discussion apart from the words “in the present paper we use the polynomial method” in the introduction.)

What is a structured motivated proof?

I wrote about motivated proofs in my previous post, but thanks to many discussions with other members of the group, my ideas have developed quite a lot since then. Here are two ways we like to think about the concept.

1. A structured motivated proof is one that is generated by standard moves.

I will not go into full detail about what I mean by this, but will do so in a future post when we have created the platform that we would like people to use in order to input proofs into the database. But the basic idea is that at any one moment one is in a certain state, which we call a proof-discovery state, and there will be a set of possible moves that can take one from the current proof-discovery state to a new one.

A proof-discovery state is supposed to be a more formal representation of the state one is in when in the middle of solving a problem. Typically, if the problem is difficult, one will have asked a number of questions, and will be aware of logical relationships between them: for example, one might know that a positive answer to Q1 could be used to create a counterexample to Q2, or that Q3 is a special case of Q4, and so on. One will also have proved some results connected with the original question, and again these results will be related to each other and to the original problem in various ways that might be quite complicated: for example P1 might be a special case of Q2, which, if true, would reduce Q3 to Q4, where Q3 is a generalization of the statement we are trying to prove.
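Purely as an illustration of the kind of data structure this suggests (the platform itself has not been described yet, and every name below is hypothetical), a proof-discovery state might store the open questions, the proved results, and labelled relationships between them, such as the ones in the example above.

```python
from dataclasses import dataclass, field


@dataclass
class ProofDiscoveryState:
    """Toy model: open questions, proved results, and labelled relationships."""
    questions: set = field(default_factory=set)
    results: set = field(default_factory=set)
    # Each relationship is (source, kind, target), e.g. ("Q3", "special_case_of", "Q4").
    relations: list = field(default_factory=list)

    def relate(self, source, kind, target):
        """Record a relationship between two items already in the state."""
        known = self.questions | self.results
        if source not in known or target not in known:
            raise KeyError("both items must already be in the state")
        self.relations.append((source, kind, target))

    def related_to(self, item):
        """All recorded relationships that mention the given item."""
        return [r for r in self.relations if item in (r[0], r[2])]


# The example relationships from the paragraph above.
state = ProofDiscoveryState(questions={"Q1", "Q2", "Q3", "Q4"}, results={"P1"})
state.relate("Q1", "positive_answer_gives_counterexample_to", "Q2")
state.relate("Q3", "special_case_of", "Q4")
state.relate("P1", "special_case_of", "Q2")
state.relate("Q2", "if_true_reduces_Q3_to", "Q4")
print(state.related_to("Q2"))
```

Moves would then be functions from one such state to another; a real design would need richer relationship types than free-form labels.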

Typically we will be focusing on one of the questions, and typically that question will take the form of some hypotheses and a target (the question being whether the hypotheses imply the target). One kind of move we might make is a standard logical move such as forwards or backwards reasoning: for example, if we have hypotheses of the form $P$ and $P\Rightarrow Q$, then we might decide to deduce $Q$. But things get more interesting when we consider slightly less basic actions we might take. Here are three examples.

- Suppose that a function $f:\mathbb{R}\to\mathbb{R}$ is given by a formula of the form $f(x)=g(p(x))$, where $g$ is a familiar continuous function and $p$ is a polynomial, and our goal is to prove that there exists $x$ such that $f(x)=0$. Without really thinking about it, we are conscious that $f$ is a composition of two functions, one of which is continuous and one of which belongs to a class of functions that are all continuous, so $f$ is continuous. Also, the conclusion $\exists x\ f(x)=0$ matches well the conclusion of the intermediate-value theorem. So the intermediate-value theorem comes naturally to mind and we add it to our list of available hypotheses. In practice we wouldn’t necessarily write it down, but the system we wish to develop is intended to model not just what we write down but also what is going on in our brains, so we propose a move that we call library extraction (closely related to what is often called premise selection in the literature). Note that we have to be a bit careful about library extraction, since we don’t want the system to be allowed to call up results from the library that appear to be irrelevant but then magically turn out to be helpful, since those would count as rabbits out of hats. So we want to allow extraction of results only if they are obvious given the context. It is not easy to define what “obvious” means, but there is a good rule of thumb for it: a library extraction is obvious if it is one of the first things ChatGPT thinks of when given a suitable non-cheating prompt. For example, I gave it the prompt, “I have a function $f$ from the reals to the reals and I want to prove that there exists some $x$ such that $f(x)=0$. Can you suggest any results that might be helpful?” and the intermediate-value theorem was its second suggestion. (Note that I had not even told it that $f$ was continuous, so I did not need to make that particular observation before coming up with the prompt.)
- We have a goal of the form $\exists x\ P(x)$. If this were a Lean proof state, the most common way to discharge a goal of this form would be to input a choice for $x$. That is, we would instantiate the existential quantifier with some $x_0$ and our new goal would be $P(x_0)$. However, as with library extraction, we have to be very careful about instantiation if we want our proof to be motivated, since we wish to disallow highly surprising choices of $x_0$ that can be found only after a long process of thought. So we have to restrict ourselves to obvious instantiations. Roughly speaking, what will count as an obvious instantiation in our system is instantiation with a term that is already present in the proof-discovery state. If no suitable term is present, then in order to continue with the proof, it will be necessary to carry out moves that generate a suitable term. A very common technique for this is the use of metavariables: instead of guessing a suitable $x_0$, we create a variable $?x$ and change the goal to $P(?x)$, which we can think of as saying “I’m going to start trying to prove $P(?x)$ even though I haven’t chosen $?x$ yet. As the attempted proof proceeds, I will note down any properties $Q_1,\dots,Q_k$ that $?x$ might have that would help me finish the proof, in the hope that (i) I get to the end and (ii) the problem $\exists x\ (Q_1(x)\wedge\dots\wedge Q_k(x))$ is easier than the original problem.”
- We cannot see how to answer the question we are focusing on, so we ask a related question. Two very common kinds of related question (as emphasized by Polya) are generalization and specialization. Perhaps we don’t see why a hypothesis is helpful, so we see whether the result holds if we drop that hypothesis. If it does, then we are no longer distracted by an irrelevant hypothesis. If it does not, then we can hope to find a counterexample that will help us understand how to use the hypothesis. Or perhaps we are trying to prove a general statement but it is not clear how to do so, so instead we formulate some special cases, hoping that we can prove them and spot features of the proofs that we can generalize. Again we have to be rather careful here not to allow “non-obvious” generalizations and specializations. Roughly the idea there is that a generalization should be purely logical: for example, dropping a hypothesis is fine but replacing the hypothesis “

is easier than the original problem.” We cannot see how to answer the question we are focusing on so we ask a related question. Two very common kinds of related question (as emphasized by Polya) are generalization and specialization. Perhaps we don’t see why a hypothesis is helpful, so we see whether the result holds if we drop that hypothesis. If it does, then we are no longer distracted by an irrelevant hypothesis. If it does not, then we can hope to find a counterexample that will help us understand how to use the hypothesis. Or perhaps we are trying to prove a general statement but it is not clear how to do so, so instead we formulate some special cases, hoping that we can prove them and spot features of the proofs that we can generalize. Again we have to be rather careful here not to allow “non-obvious” generalizations and specializations. Roughly the idea there is that a generalization should be purely logical — for example, dropping a hypothesis is fine but replacing the hypothesis “ is twice differentiable” by “

is twice differentiable” by “ is upper semicontinuous” is not — and that a specialization should be to a special case that is “extreme” in some way — one tries the smallest or largest or obviously simplest example that has not yet been investigated. 2. A structured motivated proof is one that can be generated with the help of a point-and-click system.

is upper semicontinuous” is not — and that a specialization should be to a special case that is “extreme” in some way — one tries the smallest or largest or obviously simplest example that has not yet been investigated. 2. A structured motivated proof is one that can be generated with the help of a point-and-click system.This is a surprisingly useful way to conceive of what we are talking about, especially as it relates closely to what I was talking about earlier: imposing a standard form on motivated proofs (which is why we call them “structured” motivated proofs) and gamifying the process of producing them.

The idea is that a structured motivated proof is one that can be generated using an interface (which we are in the process of creating — at the moment we have a very basic prototype that has a few of the features we will need, but not yet the more interesting ones) that has one essential property: the user cannot type in data. So what can they do? They can select text that is on their screen (typically mathematical expressions or subexpressions), they can click buttons, choose items from drop-down menus, and accept or reject “obvious” suggestions made to them by the interface.

If, for example, the current goal is an existential statement $\exists x\,P(x)$, then typing in a formula that defines a suitable $x$ is not possible, so instead one must select text or generate new text by clicking buttons, choosing from short drop-down menus, and so on. This forces the user to generate $x$, which is our proxy for showing where the idea of using $x$ came from.
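The restriction to obvious instantiations can be illustrated with a small sketch. The following Python code is a hypothetical illustration only, not the project's prototype: integers stand in for mathematical terms, Python predicates stand in for properties, and the names `obvious_instantiations` and `MetaVar` are invented here. Witnesses are offered only from terms already present in the state; when none works, a metavariable records the properties it turns out to need. The final search over `range(10)` is merely a placeholder for whatever later reasoning discharges those properties.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List

# A property of a candidate witness, modelled (hypothetically) as a Python predicate.
Predicate = Callable[[int], bool]


def obvious_instantiations(terms_in_state: Iterable[int], goal: Predicate) -> List[int]:
    """Offer as witnesses only terms already present in the proof-discovery state."""
    return [t for t in terms_in_state if goal(t)]


@dataclass
class MetaVar:
    """A placeholder ?a whose required properties are noted down as the proof proceeds."""
    name: str
    properties: List[Predicate] = field(default_factory=list)

    def require(self, prop: Predicate) -> None:
        """Record a property Q_i that ?a would need for the proof to go through."""
        self.properties.append(prop)

    def solve(self, candidates: Iterable[int]) -> List[int]:
        """Return the candidates satisfying every recorded property."""
        return [c for c in candidates if all(p(c) for p in self.properties)]


# Usage: the terms visible on screen are 0, 1, 4 and 9; the goal is "exists x, x*x = 9".
state_terms = [0, 1, 4, 9]
print(obvious_instantiations(state_terms, lambda x: x * x == 9))  # [] (no obvious witness)

# So defer the choice: create ?a and note down the properties it turns out to need.
a = MetaVar("?a")
a.require(lambda x: x > 0)
a.require(lambda x: x * x == 9)
print(a.solve(range(10)))  # [3]
```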

Broadly speaking, the prototype works by getting an LLM to read a JSON object that describes, in a structured format, the variables, hypotheses and goals involved in the proof state, and by describing to it (by means of a fairly long prompt) the various moves it might be called upon to make. Consequently, the proofs generated by the system are not formally verified, but that is not an issue that concerns us in practice, since there will be a human in the loop throughout to catch any mistakes that the LLM might make, and this flexibility may even work to our advantage by better capturing the fluidity of natural-language mathematics.
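The post does not give the JSON schema that the prototype uses, so the following Python sketch invents one purely for illustration: a proof state made of variables, hypotheses and goals is serialized to JSON, as it might be before being placed in a prompt. The field names (`variables`, `hypotheses`, `goals`), the helper `to_prompt_json` and the example state itself are all assumptions.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class ProofState:
    """Hypothetical proof-state schema: variables (name -> type), hypotheses and goals."""
    variables: Dict[str, str] = field(default_factory=dict)
    hypotheses: List[str] = field(default_factory=list)
    goals: List[str] = field(default_factory=list)


def to_prompt_json(state: ProofState) -> str:
    """Serialize the proof state as JSON so it can be embedded in an LLM prompt."""
    return json.dumps(asdict(state), indent=2)


# Usage: an invented intermediate-value-theorem situation, written in plain ASCII.
state = ProofState(
    variables={"f": "R -> R"},
    hypotheses=["f is continuous", "f(0) < 0", "f(1) > 0"],
    goals=["exists x, f(x) = 0"],
)
print(to_prompt_json(state))
```

Keeping the state in a typed structure and serializing it only at the boundary means that purely mechanical moves could later operate on the structure directly, without going through the LLM.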

There is obviously a lot more to say about what the proof-generating moves are, or (approximately equivalently) what the options provided by a point-and-click system will be. I plan to discuss that in much more detail when we are closer to having an interface ready, the target for which is the end of this calendar year. But the aim of the project is to create a database of examples of proofs that have been successfully generated using the interface, which can then be used to train AI to play the generate-structured-motivated-proof game.

How to get involved.

There are several tasks that will need doing once the project gets properly under way. Here are some of the likely ones.

The most important is for people to submit structured motivated (or move-generated) proofs to us on the platform we provide. We hope that the database will end up containing proofs of a wide range of difficulty (of two kinds — there might be fairly easy arguments that are hard to motivate and there might be arguments that are harder to follow but easier to motivate) and also a wide range of areas of mathematics. Our initial target, which is quite ambitious, is to have around 1000 entries by two years from now. While we are not in a position to accept entries yet, if you are interested in participating, then it is not too early to start thinking in a less formal way about how to convert some of your favourite proofs into motivated versions, since that will undoubtedly make it easier to get them accepted by our platform when it is ready.

We are in the process of designing the platform. As I mentioned earlier, we already have a prototype, but there are many moves we will need it to be able to do that it cannot currently do. For example, the current prototype allows just a single proof state, which consists of some variable declarations, hypotheses, and goals. It does not yet support creating subsidiary proof states (which we would need if we wanted to allow the user to consider generalizations and specializations, for example). Also, for the moment the prototype gets an LLM to implement all moves, but some of the moves, such as applying modus ponens, are extremely mechanical and would be better done using a conventional program. (On the other hand, moves such as “obvious library extraction” are better done by an LLM.) Thirdly, a technical problem is that LaTeX is currently rendered as images, which makes it hard to select subexpressions, something we will need to be able to do in a non-clunky way. And the public version of the platform will need to be web-based and very convenient to use. We will want features such as being able to zoom out and look at some kind of dependency diagram of all the statements and questions currently in play, and then zoom in on various nodes if the user wishes to work on them. If you think you may be able (and willing) to help with some of these aspects of the platform, then we would be very happy to hear from you. For some, it would probably help to have a familiarity with proof assistants, while for others we would be looking for somebody with software engineering experience. The grant from the AI for Math Fund will allow us to pay for some of this help, at rates to be negotiated. We are not yet ready to specify in detail what help we need, but would welcome any initial expressions of interest.

Once the platform is ready and people start to submit proofs, it is likely that, at least to start with, they will find that the platform does not always provide the moves they need. Perhaps they will have a very convincing account of where a non-obvious idea in the proof came from, but the system won’t be expressive enough for them to translate that account into a sequence of proof-generating moves. We will want to be able to react to such situations (if we agree that a new move is needed) by expanding the capacity of the platform. It will therefore be very helpful if people sign up to be beta-testers, so that we can try to get the platform to a reasonably stable state before opening it up to a wider public. Of course, to be a beta-tester you would need to have a few motivated proofs in mind.

It is not obvious that every proof submitted via the platform, even if submitted successfully, would be a useful addition to the database. For instance, it might be such a routine argument that no idea really needs to have its origin explained. Or it might be that, despite our best efforts, somebody finds a way of sneaking in a rabbit while using only the moves that we have provided. (One way this could happen is if an LLM made a highly non-obvious suggestion that happened to work, in which case the rule of thumb “if an LLM thinks of it, it must be obvious” would have failed in that instance.) For this reason, we envisage having a team of moderators, who will check entries and make sure that they are good additions to the database. We hope that this will be an enjoyable task, but it may have its tedious aspects, so we envisage paying moderators — again, this expense was allowed for in our proposal to the AI for Math Fund.

If you think you might be interested in any of these roles, please feel free to get in touch. Probably the hardest recruitment task for us will be identifying the right people with the right mixture of skills to help us turn the platform into a well-designed web-based one that is convenient and pleasurable to use. If you think you might be such a person, or if you have a good idea for how we should go about finding one, we would be particularly interested to hear from you.

In a future post, I will say more about the kinds of moves that our platform will allow, and will give examples of non-motivated proofs together with how motivated versions of those proofs can be found, and entered using the platform (which may involve a certain amount of speculation about what the platform will end up looking like).

How does this relate to use of tactics in a proof assistant?

In one way, our “moves” can be regarded as tactics of a kind. However, some of the moves we will need are difficult to implement in conventional proof assistants such as Lean. In parallel with the work described above, we hope to create an interface to Lean that would allow one to carry out proof-discovery moves of the kind discussed above but with the proof-discovery states being collections of Lean proof states. Members of my group have already been working on this and have made some very interesting progress, but there is some way to go. However, we hope that at some point (and this is also part of the project pitched to the AI for Math Fund) we will have created another interface that will have Lean working in the background, so that it will be possible to generate motivated proofs that will be (or perhaps it is better to say include) proofs in Lean at the same time.

Another possibility that we are also considering is to use the output of the first platform (which, as mentioned above, will be fairly formal, but not in the strict sense of a language such as Lean) to create a kind of blueprint that can then be autoformalized automatically. Then we would have a platform that would in principle allow mathematicians to search for proofs while working on their computers, without having to learn a formal language, and with their thoughts being formalized as they go.

April 28, 2022

Announcing an automatic theorem proving project

I am very happy to say that I have recently received a generous grant from the Astera Institute to set up a small group to work on automatic theorem proving, in the first instance for about three years, after which we will take stock and see whether it is worth continuing. This will enable me to take on up to about three PhD students and two postdocs over the next couple of years. I am imagining that two of the PhD students will start next October and that at least one of the postdocs will start as soon as is convenient for them. Before any of these positions are advertised, I welcome any informal expressions of interest: in the first instance you should email me, and maybe I will set up Zoom meetings. (I have no idea what the level of demand is likely to be, so exactly how I respond to emails of this kind will depend on how many of them there are.)

I have privately let a few people know about this, and as a result I know of a handful of people who are already in Cambridge and are keen to participate. So I am expecting the core team working on the project to consist of 6-10 people. But I also plan to work in as open a way as possible, in the hope that people who want to can participate in the project remotely even if they are not part of the group that is based physically in Cambridge. Thus, part of the plan is to report regularly and publicly on what we are thinking about, what problems, both technical and more fundamental, are holding us up, and what progress we make. Also, my plan at this stage is that any software associated with the project will be open source, and that if people want to use ideas generated by the project to incorporate into their own theorem-proving programs, I will very much welcome that.

I have written a 54-page document that explains in considerable detail what the aims and approach of the project will be. I would urge anyone who thinks they might want to apply for one of the positions to read it first — not necessarily every single page, but enough to get a proper understanding of what the project is about. Here I will explain much more briefly what it will be trying to do, and what will set it apart from various other enterprises that are going on at the moment.

In brief, the approach taken will be what is often referred to as a GOFAI approach, where GOFAI stands for “good old-fashioned artificial intelligence”. Roughly speaking, the GOFAI approach to artificial intelligence is to try to understand as well as possible how humans achieve a particular task, and eventually reach a level of understanding that enables one to program a computer to do the same.

As the phrase “old-fashioned” suggests, GOFAI has fallen out of favour in recent years, and some of the reasons for that are good ones. One reason is that after initial optimism, progress with that approach stalled in many domains of AI. Another is that with the rise of machine learning it has become clear that for many tasks, especially pattern-recognition tasks, it is possible to program a computer to do them very well without having a good understanding of how humans do them. For example, we may find it very difficult to write down a set of rules that distinguishes between an array of pixels that represents a dog and an array of pixels that represents a cat, but we can still train a neural network to do the job.

However, while machine learning has made huge strides in many domains, it still has several areas of weakness that are very important when one is doing mathematics. Here are a few of them.

- In general, tasks that involve reasoning in an essential way.
- Learning to do one task and then using that ability to do another.
- Learning based on just a small number of examples.
- Common-sense reasoning.
- Anything that involves genuine understanding (even if it may be hard to give a precise definition of what understanding is) as opposed to sophisticated mimicry.

Obviously, researchers in machine learning are working in all these areas, and there may well be progress over the next few years [in fact, there has been progress on some of these difficulties already of which I was unaware — see some of the comments below], but for the time being there are still significant limitations to what machine learning can do. (Two people who have written very interestingly on these limitations are Melanie Mitchell and François Chollet.)

That said, using machine learning techniques in automatic theorem proving is a very active area of research at the moment. (Two names you might like to look up if you want to find out about this area are Christian Szegedy and Josef Urban.) The project I am starting will not be a machine-learning project, but I think there is plenty of potential for combining machine learning with GOFAI ideas — for example, one might use GOFAI to reduce the options for what the computer will do next to a small set and use machine learning to choose the option out of that small set — so I do not rule out some kind of wider collaboration once the project has got going.
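The suggested division of labour, with GOFAI narrowing the options to a small set and machine learning choosing among them, can be sketched roughly as follows. This is a hypothetical Python illustration: the move names, the hand-written preconditions in `RULES`, and `toy_score` (a stand-in for a trained model, with arbitrary numbers) are all assumptions made for the example.

```python
from typing import Callable, Dict, List

# Hypothetical hand-written rules: a move is offered only if its precondition holds.
RULES: Dict[str, Callable[[dict], bool]] = {
    "intro_forall": lambda s: s["goal"].startswith("forall"),
    "instantiate_exists": lambda s: s["goal"].startswith("exists") and bool(s["terms"]),
    "library_extraction": lambda s: s["goal"].startswith("exists"),
    "modus_ponens": lambda s: any("->" in h for h in s["hypotheses"]),
}


def applicable_moves(state: dict) -> List[str]:
    """GOFAI step: use explicit rules to cut the options down to a small set."""
    return [name for name, precondition in RULES.items() if precondition(state)]


def choose(state: dict, score: Callable[[dict, str], float]) -> str:
    """Learned step: let a scoring model pick one option from the small set."""
    options = applicable_moves(state)
    if not options:
        raise ValueError("no applicable move")
    return max(options, key=lambda move: score(state, move))


def toy_score(state: dict, move: str) -> float:
    """Stand-in for a trained model; the numbers are arbitrary."""
    return {"library_extraction": 0.7, "instantiate_exists": 0.4}.get(move, 0.1)


state = {"goal": "exists x, f(x) = 0", "terms": ["0", "1"], "hypotheses": ["f(0) < 0"]}
print(applicable_moves(state))   # ['instantiate_exists', 'library_extraction']
print(choose(state, toy_score))  # library_extraction
```

The point of the split is that the learned component only ever ranks a handful of options that the rules have already judged sensible, so it cannot wander into the large searches the project wants to avoid.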

Another area that is thriving at the moment is formalization. Over the last few years, several major theorems and definitions have been fully formalized that would have previously seemed out of reach — examples include Gödel’s theorem, the four-colour theorem, Hales’s proof of the Kepler conjecture, Thompson’s odd-order theorem, and a lemma of Dustin Clausen and Peter Scholze with a proof that was too complicated for them to be able to feel fully confident that it was correct. That last formalization was carried out in Lean by a team led by Johan Commelin, which is part of the more general and exciting Lean group spearheaded by Kevin Buzzard.

As with machine learning, I mention formalization in order to contrast it with the project I am announcing here. It may seem slightly negative to focus on what it will not be doing, but I feel it is important, because I do not want to attract applications from people who have an incorrect picture of what they would be doing. Also as with machine learning, I would welcome and even expect collaboration with the Lean group. For us it would be potentially very interesting to make use of the Lean database of results, and it would also be nice (even if not essential) to have output that is formalized using a standard system. And we might be able to contribute to the Lean enterprise by creating code that performs steps automatically that are currently done by hand. A very interesting-looking new institute, the Hoskinson Center for Formal Mathematics, has recently been set up with Jeremy Avigad at the helm, which will almost certainly make such collaborations easier.

But now let me turn to the kinds of things I hope this project will do.

Why is mathematics easy?

Ever since Turing, we have known that there is no algorithm that will take as input a mathematical statement and output a proof if the statement has a proof or the words “this statement does not have a proof” otherwise. (If such an algorithm existed, one could feed it statements of the form “algorithm A halts” and the halting problem would be solved.) If $\mathrm{P}\neq\mathrm{NP}$, then there is not even a practical algorithm for determining whether a statement has a proof of at most some given length — a brute-force algorithm exists, but takes far too long. Despite this, mathematicians regularly find long and complicated proofs of theorems. How is this possible?
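The brute-force algorithm is easy to write down. As a minimal sketch, assuming a toy string-rewriting system as a stand-in for a formal proof system (a “proof of length at most n” becomes a chain of at most n applications of the relations turning one word into another), the following Python function examines every derivation up to a given length by breadth-first search. It always terminates, but the number of words it may have to examine can grow exponentially with the bound, which illustrates why such a search is hopeless for long proofs. The names `neighbours` and `bounded_search` are our own.

```python
from collections import deque
from typing import List, Optional, Tuple

Rule = Tuple[str, str]  # a relation lhs = rhs, usable in either direction


def neighbours(word: str, rules: List[Rule]) -> List[str]:
    """All words obtained from `word` by one application of one relation."""
    out = []
    for lhs, rhs in rules:
        for old, new in ((lhs, rhs), (rhs, lhs)):
            i = word.find(old)
            while i != -1:
                out.append(word[:i] + new + word[i + len(old):])
                i = word.find(old, i + 1)
    return out


def bounded_search(source: str, target: str, rules: List[Rule], max_steps: int) -> Optional[List[str]]:
    """Breadth-first search for a derivation of at most `max_steps` steps.

    Always terminates, but the number of words examined can grow
    exponentially with `max_steps`.
    """
    frontier = deque([(source, [source])])
    seen = {source}
    while frontier:
        word, path = frontier.popleft()
        if word == target:
            return path
        if len(path) - 1 == max_steps:
            continue  # depth bound reached; do not expand further
        for nxt in neighbours(word, rules):
            if nxt not in seen:
                seen.add(nxt)
                frontier.append((nxt, path + [nxt]))
    return None


# Usage: with the single commutation relation ab = ba, derive baa from aab.
print(bounded_search("aab", "baa", [("ab", "ba")], 2))  # ['aab', 'aba', 'baa']
print(bounded_search("aab", "baa", [("ab", "ba")], 1))  # None
```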

The broad answer is that while the theoretical results just alluded to show that we cannot expect to determine the proof status of arbitrary mathematical statements, that is not what we try to do. Rather, we look at only a tiny fraction of well-formed statements, and the kinds of proofs we find tend to have a lot more structure than is guaranteed by the formal definition of a proof as a sequence of statements, each of which is either an initial assumption or follows in a simple way from earlier statements. (It is interesting to speculate about whether there are, out there, utterly bizarre and idea-free proofs that just happen to establish concise mathematical statements but that will never be discovered because searching for them would take too long.) A good way of thinking about this project is that it will be focused on the following question.

Question. What is it about the proofs that mathematicians actually find that makes them findable in a reasonable amount of time?

Clearly, a good answer to this question would be extremely useful for the purposes of writing automatic theorem proving programs. Equally, any advances in a GOFAI approach to writing automatic theorem proving programs have the potential to feed into an answer to the question. I don’t have strong views about the right balance between the theoretical and practical sides of the project, but I do feel strongly that both sides should be major components of it.
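The formal notion of proof mentioned above, a sequence of statements each of which is either an initial assumption or follows in a simple way from earlier statements, is easy to check by machine even though proofs are hard to find. As a minimal sketch, assuming (purely for illustration) that the only way a statement can “follow in a simple way” is by one application of modus ponens, the following Python function performs that check; the formula encoding and the names `implies` and `is_valid_proof` are our own.

```python
from typing import List, Tuple, Union

# A formula is either an atom (a string) or an implication ("->", A, B).
Formula = Union[str, Tuple[str, "Formula", "Formula"]]


def implies(a: Formula, b: Formula) -> Formula:
    """Build the implication a -> b."""
    return ("->", a, b)


def is_valid_proof(assumptions: List[Formula], steps: List[Formula]) -> bool:
    """Check that each step is an assumption or follows by modus ponens from earlier steps."""
    for i, step in enumerate(steps):
        earlier = steps[:i]
        if step in assumptions:
            continue
        # Modus ponens: some earlier p together with an earlier p -> step.
        if any(implies(p, step) in earlier for p in earlier):
            continue
        return False
    return True


hyps = ["A", implies("A", "B"), implies("B", "C")]
print(is_valid_proof(hyps, ["A", implies("A", "B"), "B", implies("B", "C"), "C"]))  # True
print(is_valid_proof(hyps, ["A", "C"]))  # False
```

Checking is a single pass over the sequence; the hard part, which the question above is about, is producing the sequence in the first place.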

The practical side of the project will, at least to start with, be focused on devising algorithms that find proofs in a way that imitates as closely as possible how humans find them. One important aspect of this is that I will not be satisfied with programs that find proofs after carrying out large searches, even if those searches are small enough to be feasible. More precisely, searches will be strongly discouraged unless human mathematicians would also need to do them. A question that is closely related to the question above is the following, which all researchers in automatic theorem proving have to grapple with.

Question. Humans seem to be able to find proofs with a remarkably small amount of backtracking. How do they prune the search tree to such an extent?

Allowing a program to carry out searches of “silly” options that humans would never do is running away from this absolutely central question.

With Mohan Ganesalingam, Ed Ayers and Bhavik Mehta (but not simultaneously), I have over the years worked on writing theorem-proving programs with as little search as possible. This will provide a starting point for the project. One of the reasons I am excited to have the chance to set up a group is that I have felt for a long time that with more people working on the project, there is a chance of much more rapid progress — I think the progress will scale up more than linearly in the number of people, at least up to a certain size. And if others were involved round the world, I don’t think it is unreasonable to hope that within a few years there could be theorem-proving programs that were genuinely useful — not necessarily at a research level but at least at the level of a first-year undergraduate. (To be useful a program does not have to be able to solve every problem put in front of it: even a program that could solve only fairly easy problems but in a sufficiently human way that it could explain how it came up with its proofs could be a very valuable educational tool.)

A more distant dream is of course to get automatic theorem provers to the point where they can solve genuinely difficult problems. Something else that I would like to see coming out of this project is a serious study of how humans do this. From time to time I have looked at specific proofs that appear to require at a certain point an idea that comes out of nowhere, and after thinking very hard about them I have eventually managed to present a plausible account of how somebody might have had the idea, which I think of as a “justified proof”. I would love it if there were a large collection of such accounts, and I have it in mind as a possible idea to set up (with help) a repository for them, though I would need to think rather hard about how best to do it. One of the difficulties is that whereas there is widespread agreement about what constitutes a proof, there is not such a clear consensus about what constitutes a convincing explanation of where an idea comes from. Another theoretical problem that interests me a lot is the following.

Problem. Come up with a precise definition of a “proof justification”.

Though I do not have a satisfactory definition, very recently I have had some ideas that will, I think, help to narrow down the search substantially. I am writing these ideas down and hope to make them public soon.

Who am I looking for?

There is much more I could say about the project, but if this whets your appetite, then I refer you to the document where I have said much more about it. For the rest of this post I will say a little bit more about the kind of person I am looking for and how a typical week might be spent.

The most important quality I am looking for in an applicant for a PhD or postdoc associated with this project is a genuine enthusiasm for the GOFAI approach briefly outlined here and explained in more detail in the much longer document. If you read that document and think that that is the kind of work you would love to do and would be capable of doing, then that is a very good sign. Throughout the document I give indications of things that I don’t yet know how to do. If you find yourself immediately drawn into thinking about those problems, which range from small technical problems to big theoretical questions such as the ones mentioned above, then that is even better. And if you are not fully satisfied with a proof unless you can see why it was natural for somebody to think of it, then that is better still.

I would expect a significant proportion of people reading the document to have an instinctive reaction that the way I propose to attack the problems is not the best way, and that surely one should use some other technique — machine learning, large search, the Knuth-Bendix algorithm, a computer algebra package, etc. etc. — instead. If that is your reaction, then the project probably isn’t a good fit for you, as the GOFAI approach is what it is all about.

As far as qualifications are concerned, I think the ideal candidate is somebody with plenty of experience of solving mathematical problems (either challenging undergraduate-level problems for a PhD candidate or research-level problems for a postdoc candidate), and good programming ability. But if I had to choose one of the two, I would pick the former over the latter, provided that I could be convinced that a candidate had a deep understanding of what a well-designed algorithm would look like. (I myself am not a fluent programmer — I have some experience of Haskell and Python and I think a pretty good feel for how to specify an algorithm in a way that makes it implementable by somebody who is a quick coder, and in my collaborations so far have relied on my collaborators to do the coding.) Part of the reason for that is that I hope that if one of the outputs of the group is detailed algorithm designs, then there will be remote participants who would enjoy turning those designs into code.

How will the work be organized?

The core group is meant to be a genuine team rather than simply a group of a few individuals with a common interest in automatic theorem proving. To this end, I plan that the members of the group will meet regularly — I imagine something like twice a week for at least two hours and possibly more — and will keep in close contact, and very likely meet less formally outside those meetings. The purpose of the meetings will be to keep the project appropriately focused. That is not to say that all team members will work on the same narrow aspect of the problem at the same time. However, I think that with a project like this it will be beneficial (i) to share ideas frequently, (ii) to keep thinking strategically about how to get the maximum expected benefit for the effort put in, and (iii) to keep updating our public list of open questions (which will not be open questions in the usual mathematical sense, but questions more like “How should a computer do this?” or “Why is it so obvious to a human mathematician that this would be a silly thing to try?”).

In order to make it easy for people to participate remotely, I think probably we will want to set up a dedicated website where people can post thoughts, links to code, questions, and so on. Some thought will clearly need to go into how best to design such a site, and help may be needed to build it, which if necessary I could pay for. Another possibility would of course be to have Zoom meetings, but whether or not I would want to do that depends somewhat on who ends up participating and how they do so.

Since the early days of Polymath I have become much more conscious that merely stating that a project is open to anybody who wishes to join in does not automatically make it so. For example, whereas I myself am comfortable with publicly suggesting a mathematical idea that turns out on further reflection to be fruitless or even wrong, many people are, for very good reasons, not willing to do so, and those people belong disproportionately to groups that have been historically marginalized from mathematics — which of course is not a coincidence. Because of this, I have not yet decided on the details of how remote participation might work. Maybe part of it could be fully open in the way that Polymath was, but part of it could be more private and carefully moderated. Or perhaps separate groups could be set up that communicated regularly with the Cambridge group. There are many possibilities, but which ones would work best depends on who is interested. If you are interested in the project but would feel excluded by certain modes of participation, then please get in touch with me and we can think about what would work for you.

January 30, 2021

Leicester mathematics under threat again

Four years ago I wrote a post about an awful plan by Leicester University to sack its entire mathematics department, invite them to reapply for their jobs, and rehire all but six “lowest performers”. Fortunately, after an outcry, the university backed down.

Alas, now there’s a new vice-chancellor who appears to have learned nothing from the previous debacle. This time, the plan, known by the nice fluffy name Shaping for Excellence, is to get rid of research in certain subjects, of which pure mathematics is one (and medieval literature another). This would mean making all eight pure mathematicians at Leicester redundant. The story is spreading rapidly on social media (it’s attracted quite a bit of attention on Twitter, Reddit and Hacker News, for example), so I won’t write a long post. But just in case you haven’t heard about it, here’s a link to a petition you can sign if, like a lot of other people, you feel strongly that this is a bad decision. (At the time of writing, it has been signed, in well under 24 hours, by about 2,500 people, many of them very well-known academics in the areas that Leicester University claims to be intending to promote.)

May 20, 2020

Mathematical Research Reports: a “new” mathematics journal is launched

From time to time academic journals undergo an interesting process of fission. Typically as a result of some serious dissatisfaction, the editorial board resigns en masse to set up a new journal, the publishers of the original journal build a new editorial board from scratch, and the result is two journals, one inheriting the editors and collective memory of the original journal, and the other keeping the name and the publisher. Which is the “true” successor? In practice it tends to be the one with the editors, with its sibling surviving as a zombie journal that is the successor in name only. Perhaps there are examples that go the other way, and there may be examples where both journals go on to thrive, but I have not looked closely at the examples I know about.

I’m mentioning this because recently I have been involved in a rather unusual example of this phenomenon. Most cases I know of are the result either of frustration with the practices of the big commercial publishers or of malpractice by an editor-in-chief. But this was an open access journal with no publication charges, and with an extremely efficient and impeccably behaved editor-in-chief. So what was the problem?

The journal started out in 1995 as Electronic Research Announcements of the AMS, or ERA-AMS for short. It was still called that when I first joined the editorial board. Its editor-in-chief was Svetlana Katok, who did a great job, and there was a high-powered editorial board. As its name suggests, it specialized in shortish papers announcing results that would then appear with more details in significantly longer papers, so it was a little like Comptes Rendus in its aim. It would also accept short articles of a more traditional kind.

It never published all that many papers, and in 2007, I think for that reason (but don’t remember for sure), the AMS decided to discontinue it. But Svetlana Katok had put a lot into the journal and managed to find another publisher, the American Institute of Mathematical Sciences, and the editorial board agreed to continue serving. The name of the journal was changed to Electronic Research Announcements in the Mathematical Sciences, and its abbreviation was slightly abbreviated from ERA-AMS to ERA-MS.

In 2016, after 22 years, Svetlana Katok decided to step down, and Boris Hasselblatt took over. It was a good moment to try to revitalize the journal, so measures were taken such as designing a new and better website and making more effort to publicize the journal, in the hope of attracting more submissions (or more precisely, more submissions of a high enough quality that we would want to publish them).

However, despite these measures, the numbers remained fairly low: around ten a year (with quite a bit of variation). This, indirectly, caused the problem that led to the split. We editors would have liked to see more papers published, but we were not so worried about it that we would have been prepared to sacrifice quality to achieve it: we were ready to accept that this was, at least for now, a small journal. But AIMS was not so happy. In an effort to remedy (as they saw it) the situation, they appointed a co-editor-in-chief, who in turn appointed a number of new editors with a more applied focus, the idea being that broadening the scope of the journal would increase the number of papers published.

That did not precipitate the resignations, but at that stage most of us did not know that the new editors had been appointed without any consultation even with Boris Hasselblatt. But then AIMS took things a step further. Until that point, the journal had adopted a practice that I strongly approve of, which was for the editor who handled a paper to make a recommendation to the rest of the editorial board, with other editors encouraged to comment on that recommendation. This practice helps to guard against “rogue” editors and against abuse of the system in general. It also helps to maintain consistent standards, and provides a gentle pressure on editors to do their job conscientiously — there’s nothing like knowing that you’re going to have to justify your decision to a bunch of mathematicians.

But suddenly the publishers told us that this system had to change, and that from now on the editorial board would not have the opportunity to vet papers, and would continue to have no say in new editorial appointments. (Various justifications were given for this, including that it would make it harder to recruit editors if they thought they had to make judgments about papers not in their immediate area.) At that point, it was clear that the soul of the journal was about to be destroyed, so over a few days the entire board (from before the start of the changes) resigned, resolving to start afresh with a new name.

That new name is Mathematical Research Reports. We will continue to accept reports on longer work, as well as short articles. In addition we welcome short survey articles. We regard it as the continuation in spirit of ERA-MS. Another unusual feature of this particular split is that the other half, still published by AIMS, has also changed its name and is now called Electronic Research Archive.

If, like me, you are always on the lookout for high-quality “ethical” journals (which I loosely define as free to read, free to publish in, and adopting high standards of editorial practice), then please add Mathematical Research Reports to your list. Have a look at the back catalogue of ERA-MS and ERA-AMS and you will get an idea of our standards. It would be wonderful if the unfortunate events of the last year or so were to be the catalyst that led to the journal finally becoming established in the way that it has deserved to be for a long time.

March 28, 2020

How long should a lockdown-relaxation cycle last?

On page 12 of a document put out by Imperial College London, which has been very widely read and commented on, and which has had a significant influence on UK policy concerning the coronavirus, there is a diagram that shows the possible impact of a strategy of alternating between measures that are serious enough to cause the number of cases to decline, and more relaxed measures that allow it to grow again. They call this adaptive triggering: when the number of cases needing intensive care reaches a certain level per week, the stronger measures are triggered, and when it declines to some other level (the numbers they give are 100 and 50, respectively), they are lifted.

If such a policy were ever to be enacted, a very important question would be how to optimize the choice of the two triggers. I’ve tried to work this out, subject to certain simplifying assumptions (and it’s important to stress right at the outset that these assumptions are questionable, and therefore that any conclusion I come to should be treated with great caution). This post is to show the calculation I did. It leads to slightly counterintuitive results, so part of my reason for posting it publicly is as a sanity check: I know that if I post it here, then any flaws in my reasoning will be quickly picked up. And the contrapositive of that statement is that if the reasoning survives the harsh scrutiny of a typical reader of this blog, then I can feel fairly confident about it. Of course, it may also be that I have failed to model some aspect of the situation that would make a material difference to the conclusions I draw. I would be very interested in criticisms of that kind too. (Indeed, I make some myself in the post.)

Before I get on to what the model is, I would like to make clear that I am not advocating this adaptive-triggering policy. Personally, what I would like to see is something more like what Tomas Pueyo calls The Hammer and the Dance: roughly speaking, you get the cases down to a trickle, and then you stop that trickle turning back into a flood by stamping down hard on local outbreaks using a lot of testing, contact tracing, isolation of potentially infected people, etc. (This would need to be combined with other measures such as quarantine for people arriving from more affected countries etc.) But it still seems worth thinking about the adaptive-triggering policy, in case the hammer-and-dance policy doesn’t work (which could be for the simple reason that a government decides not to implement it).

A very basic model.

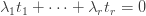

Here was my first attempt at modelling the situation. I make the following assumptions. The numbers $m, M, \lambda, \mu, c, d$ are positive constants.

1. Relaxation is triggered when the rate of infection is $m$.
2. Lockdown (or similar) is triggered when the rate of infection is $M$.
3. The rate of infection is of the form $Ae^{\lambda t}$ during a relaxation phase.
4. The rate of infection is of the form $Be^{-\mu t}$ during a lockdown phase.
5. The rate of “damage” due to infection is $c$ times the infection rate.
6. The rate of damage due to lockdown measures is $d$ while those measures are in force.

For the moment I am not concerned with how realistic these assumptions are, but just with what their consequences are. What I would like to do is minimize the average damage by choosing $m$ and $M$ appropriately.
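To make the six assumptions concrete, here is a minimal brute-force sketch in Python. It is my own illustration rather than anything from the calculation that follows: it steps time forward in small increments, switches phase when the infection rate reaches $m$ or $M$, and reports total damage divided by elapsed time. The function name, the step size `dt` and the number of cycles are arbitrary choices made for the sketch, and the parameter values in the usage line are made up.

```python
import math


def simulate_average_damage(m, M, lam, mu, c, d, n_cycles=10, dt=1e-3):
    """Brute-force time-stepping of the lockdown-relaxation model.

    Starts a relaxation phase with infection rate m, switches to lockdown
    once the rate reaches M, and back to relaxation once it falls to m.
    Returns total damage divided by elapsed time after n_cycles full cycles.
    """
    rate, t, damage = m, 0.0, 0.0
    in_lockdown = False
    cycles = 0
    while cycles < n_cycles:
        if in_lockdown:
            new_rate = rate * math.exp(-mu * dt)
            # Exact integral of rate * e^(-mu * s) over one step.
            infections = (rate - new_rate) / mu
            damage += c * infections + d * dt  # infection damage plus lockdown cost
            rate = new_rate
            if rate <= m:
                in_lockdown = False
                cycles += 1  # one relaxation phase plus one lockdown phase completed
        else:
            new_rate = rate * math.exp(lam * dt)
            # Exact integral of rate * e^(lam * s) over one step.
            infections = (new_rate - rate) / lam
            damage += c * infections  # no lockdown cost while relaxed
            rate = new_rate
            if rate >= M:
                in_lockdown = True
        t += dt
    return damage / t


print(simulate_average_damage(m=1.0, M=2.0, lam=0.2, mu=0.1, c=1.0, d=0.5))
```

Because it never uses closed-form phase lengths, a simulation like this can serve as an independent check on any formula derived for the average damage.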

I may as well give away one of the punchlines straight away, since no calculation is needed to explain it. The time it takes for the infection rate to increase from $m$ to $M$ or to decrease from $M$ to $m$ depends only on the ratio $M/m$. Therefore, if we divide both $m$ and $M$ by 2, we decrease the damage due to the infection and have no effect on the damage due to the lockdown measures. Thus, for any fixed ratio $M/m$, it is best to make both $m$ and $M$ as small as possible.
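The claim that phase lengths depend only on $M/m$ can be checked directly. This short Python sketch (function name and numbers are illustrative assumptions) computes how long exponential growth at rate $\lambda$ takes to go from $m$ to $M$, and how long exponential decay at rate $\mu$ takes to come back down.

```python
import math


def phase_durations(m, M, lam, mu):
    """Return (relaxation_length, lockdown_length) for triggers m < M.

    Relaxation: m * e^(lam * t) reaches M when t = log(M / m) / lam.
    Lockdown:   M * e^(-mu * t) falls to m when t = log(M / m) / mu.
    Both depend on m and M only through the ratio M / m.
    """
    log_ratio = math.log(M / m)
    return log_ratio / lam, log_ratio / mu


# Halving both triggers leaves the cycle unchanged (illustrative numbers only).
print(phase_durations(100, 200, lam=0.2, mu=0.1))
print(phase_durations(50, 100, lam=0.2, mu=0.1))
```

Since the timing of the cycle is unchanged while every infection rate along the way is halved, the infection damage halves and the lockdown damage stays the same.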

This has the counterintuitive consequence that during one of the cycles one would be imposing lockdown measures that were doing far more damage than the damage done by the virus itself. However, I think something like that may actually be correct: unless the triggers are so low that the assumptions of the model completely break down (for example because local containment is, at least for a while, a realistic policy, so national lockdown is pointlessly damaging), there is nothing to be lost, and lives to be gained, by keeping them in the same proportion but decreasing them.

Now let me do the calculation, so that we can think about how to optimize the ratio $M/m$ for a fixed $m$.

The time taken for the infection rate to increase from $m$ to $M$ is $\lambda^{-1}\log(M/m)$, and during that time the number of infections is $\int_0^{\lambda^{-1}\log(M/m)} me^{\lambda t}\,dt = \lambda^{-1}(M-m)$.
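As a numerical sanity check of that integral, the following Python sketch evaluates $\int_0^{\lambda^{-1}\log(M/m)} me^{\lambda t}\,dt$ with the midpoint rule and compares it with $\lambda^{-1}(M-m)$. The values of $m$, $M$ and $\lambda$ are arbitrary choices for illustration.

```python
import math


def infections_during_growth(m, M, lam, n=100_000):
    """Midpoint-rule integral of m * e^(lam * t) from t = 0 to t = log(M / m) / lam."""
    T = math.log(M / m) / lam  # length of the relaxation phase
    h = T / n
    return sum(m * math.exp(lam * (i + 0.5) * h) for i in range(n)) * h


m, M, lam = 1.0, 3.0, 0.25  # illustrative values only
numeric = infections_during_growth(m, M, lam)
closed_form = (M - m) / lam
print(numeric, closed_form)
```

The two numbers should agree to many decimal places.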

By symmetry the number of infections during the lockdown phase is $\mu^{-1}(M-m)$ (just run time backwards). So during a time $(\lambda^{-1}+\mu^{-1})\log(M/m)$ the damage done by infections is $c(\lambda^{-1}+\mu^{-1})(M-m)$, making the average damage $c(M-m)/\log(M/m)$. Meanwhile, the average damage done by lockdown measures over the whole cycle is $d\mu^{-1}/(\lambda^{-1}+\mu^{-1}) = d\lambda/(\lambda+\mu)$.
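The two components of the average damage can be packaged as a small Python function. This is a sketch with a hypothetical name and made-up parameter values; it simply encodes $c(M-m)/\log(M/m)$ and $d\lambda/(\lambda+\mu)$ and shows how each part responds when both triggers are halved.

```python
import math


def average_damage(m, M, lam, mu, c, d):
    """Return (infection_part, lockdown_part) of the average damage per unit time.

    Over one cycle of length (1/lam + 1/mu) * log(M/m) there are
    (1/lam + 1/mu) * (M - m) infections, so the infection part is
    c * (M - m) / log(M / m). Lockdown occupies the fraction
    (1/mu) / (1/lam + 1/mu) = lam / (lam + mu) of the time.
    """
    infection_part = c * (M - m) / math.log(M / m)
    lockdown_part = d * lam / (lam + mu)
    return infection_part, lockdown_part


# Illustrative parameters only: halving both triggers halves the infection part
# and leaves the lockdown part unchanged.
print(average_damage(100, 200, lam=0.2, mu=0.1, c=1.0, d=50.0))
print(average_damage(50, 100, lam=0.2, mu=0.1, c=1.0, d=50.0))
```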

Note that the lockdown damage doesn’t depend on $m$ and $M$: it just depends on the proportion of time spent in lockdown, which depends only on the ratio between $\lambda$ and $\mu$. So from the point of view of optimizing $m$ and $M$, we can simply forget about the damage caused by the lockdown measures.

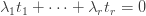

Returning, therefore, to the term $c(M-m)/\log(M/m)$, let us say that $M=(1+\theta)m$. Then the term simplifies to $cm\theta/\log(1+\theta)$. This increases with $\theta$ (since $\log(1+\theta)$ is concave and vanishes at $\theta=0$, the ratio $\log(1+\theta)/\theta$ decreases as $\theta$ grows, and it tends to 1 as $\theta\to 0$), which leads to a second counterintuitive conclusion, which is that for fixed $m$, $\theta$ should be as close as possible to 0. So if, for example, $\lambda=2\mu$, which tells us that the lockdown phases have to be twice as long as the relaxation phases, then it would be better to have cycles of two days of lockdown and one of relaxation than cycles of six weeks of lockdown and three weeks of relaxation.
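To get a feel for the size of the effect, here is a Python sketch comparing the two cycle lengths in that example. The growth rate is a loud assumption not taken from the post: I suppose, purely for illustration, that infections double every four days when measures are relaxed, so $\lambda = (\ln 2)/4$ per day, and I take $\mu = \lambda/2$ as in the example. A relaxation phase of $T$ days then has $\log(1+\theta) = \lambda T$.

```python
import math

# Hypothetical parameters: infections double every 4 days when relaxed
# (lam = ln 2 / 4 per day), and lam = 2 * mu as in the example above.
lam = math.log(2) / 4
mu = lam / 2


def infection_damage_factor(relax_days):
    """theta / log(1 + theta) for a cycle whose relaxation phase lasts relax_days.

    Growing from m to M = (1 + theta) * m takes log(1 + theta) / lam days,
    so log(1 + theta) = lam * relax_days. The average infection damage is
    this factor times c * m.
    """
    log1p_theta = lam * relax_days
    theta = math.expm1(log1p_theta)  # e^x - 1, accurate for small x
    return theta / log1p_theta


for relax_days in (1, 21):
    lockdown_days = lam * relax_days / mu  # equals 2 * relax_days since lam = 2 * mu
    print(f"{lockdown_days:g} days lockdown, {relax_days} day(s) relaxation: "
          f"average infection damage = {infection_damage_factor(relax_days):.2f} * c * m")
```

Under these made-up parameters the two-day/one-day cycle keeps the average infection damage within about 10% of $cm$, while the six-week/three-week cycle makes it roughly ten times larger. The lockdown cost $d\lambda/(\lambda+\mu)$ is the same in both cases, since lockdown occupies two thirds of the time either way.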