Andrea Phillips's Blog

April 27, 2026

AI is Making You More Stupider

This post is part of a series currently in progress. We’re adding links and adjusting titles as we go.

Why AI Sucks and You Shouldn’t Use It

AI is Fundamentally Bad for Most Tasks

AI is Destroying the Economy, Part I

AI is Destroying the Economy, Part II

AI is Making You More Stupider

That Original Bluesky Thread About Art

All right, let’s make the absolute last-ditch argument against using AI. Let’s say that you don’t care that it’s unreliable vis-a-vis objective reality, and that you think the environmental and economic arguments are too big and systemic for your own use to matter. Let’s say that you’re comfortable with the moral implications for your own use cases.

Putting aside all of that, you still surely care about yourself, and about how use of AI is affecting you, personally.

Maybe you’re using AI to summarize long documents for you, or to help you write or fix code. For translating between human languages. Maybe you’re tweaking things you wrote yourself to sound more friendly, or more assertive. What’s the harm, really?

It turns out there is a harm to the user, and it’s a doozy. Using an LLM is actively making you more stupid than you were to begin with.

Your brain is very much a use-it-or-lose-it kind of deal. This will be obvious to anyone who's learned French in high school and then, after several years, realized they no longer recall anything beyond a handful of basic phrases. The brain is plastic, and it adapts to whatever uses we put it to. Or don’t put it to.

Don't take my word for it. Here's a paper explaining how that works: From tools to threats: a reflection on the impact of artificial-intelligence chatbots on cognitive health

And another: Use of large language models might affect our cognitive skills

Then we get into real-world proof that this is actually happening: The cognitive impacts of large language model interactions on problem solving and decision making using EEG analysis

And another: Your Brain on ChatGPT: Accumulation of Cognitive Debt when Using an AI Assistant for Essay Writing Task.

And one more for fun: Cognitive ease at a cost: LLMs reduce mental effort but compromise depth in student scientific inquiry

This isn’t just theory, and it’s not just spitballing based on a couple of online surveys. These are real tests. A few of these researchers have gone as far as doing functional imaging to see what's happening inside the brain — one compared people with no AI use, people who use only search engines, and people who actively use AI chatbots.

The results show that on a clearly visible, physical level, there are changes happening in your brain structure when you use AI. And they are not good, helpful changes, either. You’re not using it, so you lose it.

These results were troubling enough to me that I've seriously cut back on looking things up in a search engine the second I can't remember them. What was the name of the actor in that movie? What was even the name of the movie? Now I give it a few hours to see if it surfaces, because I don’t want to be undermining my own capacity to remember any more than I already have.

The brain needs exercise. Memory needs exercise. There was a time I knew the phone numbers of all of my friends and family. There was a time I knew hundreds of characters in Japanese. I don’t anymore. You can probably also name entire categories of things you used to know, but now you don’t.

It isn’t just your memory at stake. Last time we briefly touched on the problem of deskilling in the context of social connections — if you’re using an AI chatbot for companionship, or to mediate your communications with other human beings, you are very literally and very meaningfully impairing your ability to connect with other humans on your own.

But even that isn’t the worst outcome we’re seeing in AI users.

One of the most common uses of AI right now is some category of ‘research.’ If you are using a chatbot to find information for you, assess it, and reach conclusions on how to use that information, that's work your brain isn't doing.

And what is the core function of our brains in our daily life? It is to gather information, analyze that information, and reach conclusions based on our findings. That is the very basis of your ability to think. The skill that you are losing is the ability to think for yourself.

You are no longer augmenting your own brain; you’re replacing it with something else, something you don’t have any control over. And that's not even taking into account all of those very serious questions about the reliability of that information and those conclusions AI has provided you.

Easy is a Toxin

It doesn’t even take long for the poison to kick in. We lose the taste for thinking on our own shockingly fast. Here’s one more study, just for fun: AI Assistance Reduces Persistence and Hurts Independent Performance

This one shows that if you’re given a task and an AI tool to help you with it, even for only ten minutes, and then that tool is taken away, you’re measurably worse at the task than before — and you’re more likely to just give up. After ten minutes.

So if you're asking the chatbot to write and debug all of your code for you, you’re slowly becoming a worse programmer. If you're asking the chatbot to plan your day and prioritize your tasks, you're not exercising your own executive functioning. If you're using the chatbot as a confidante, you're not exercising the skill of expressing yourself to and connecting with other human beings.

And if you're asking the chatbot to research and summarize things for you, then through lack of practice, you are slowly killing your own ability to take in and process information for yourself. Planning, prioritizing, weighing, all of it.

When you’re venturing into new-to-you areas of knowledge, it might still feel like you’re learning something, but it’s a guarantee that you're coming away with a more shallow understanding of the material than if you'd actually done the reading. The symbol is not the signified. Reading War and Peace can't be replaced by reading the Spark Notes. And your own takeaways might have been very, very different.

It's a great irony that one of the most hyped uses of AI right now is in education, with an eye to replacing human teachers and professors, when this known impact means AI is fundamentally unsuitable for any educational context.

This isn’t the first technology to undermine our own natural capacities and systems. Socrates famously complained that the technology of writing eroded the memory. We can’t walk as far since we invented cars. We still haven’t even fully reckoned with the social and physical changes caused by a seemingly old technology: artificial light and the dramatic way it’s changed sleep.

Our first impulse is almost always to choose ease over effort. And lo these past two hundred years, we’ve made our lives very easy, indeed. We’re killing ourselves with comfort.

But. At a certain point, if you’re using technology to reduce every friction point in your life to nothing, if you’re trying to create a smooth and effortless slide for yourself from the cradle to the grave, then I have to ask you: what are you even here for? What is the point of your life, anyway?

Is it to just collect positive sensory experiences? Then you might as well be a sea sponge. Or do you want something purposeful, do you want a life with meaning? Do you want to connect with other human beings? Do you want to learn and grow? Do you want to make and build ideas, art, communities, businesses?

All of that takes work. Your work.

April 21, 2026

AI is Morally Bankrupt

So far I’ve been making the case against AI with cold, hard numbers: error rates, bottles of water evaporated, dollars invested. Now it’s time to move into a more subjective — and yet to my mind, far more important — set of considerations: the moral and ethical implications of AI and how we use it.

There are three categories of problem, here, all of which stem from the fundamental problem that an LLM is not, in any meaningful way, a thinking system, which also means it does not and therefore cannot have ethics or morals or feelings in any way whatsoever.

These categories of peril are attribution, or, the plagiarism issue; accountability, or more precisely the way automated systems avoid accountability by design; and attachment, which is to say the hazards that arise from you getting too attached to the machine.

Let’s look at them one by one.

The Problem of Attribution

In my creative communities, we call the chatbots “the plagiarism machine,” among other, worse nicknames.

Do you remember when you were first learning how to write a research paper in school? Your teacher probably told you it’s not okay to copy something word for word from an encyclopedia (or cut and paste from Wikipedia) because that’s plagiarism. You need to rewrite it in your own words, or else you need to attribute the original source.

An LLM isn’t really capable of attribution, because it doesn’t actually know where the words it’s saying are coming from. (And when it does put in something that looks like a quote with an attribution, it’s often wrong.) An LLM “learns” by sucking in all of the information in the world, and those patterns are still there, but the sourcing is long gone.

But it’s prone to regurgitating whole chunks of a single work, not even mixing together different sources. In fact, it can spit out almost entire novels from memory if you ask it right. Indeed, it turns out it can’t paraphrase work without plagiarizing even when that’s explicitly what you’ve asked it to do.

So these tools are, literally and legally, doing plagiarism every time you use them.

Over the long haul, this is robbing artists of their livelihood by undercutting the labor it took not just to make any given work of art, but also the years of work to achieve the level of skill required to make it in the first place. And ultimately, it’s going to rob us collectively of all the art that never gets made because the artist had to go into nursing school or agricultural work to pay the bills.

The cold fact is that if you ask a machine to spit out a new horror novel for you based on the work of Chuck Wendig, you’re both stealing Chuck’s body of work and undercutting the market for the stuff he’s actually made at the same time. And when you use a GenAI tool to make an illustration in the style of Rebecca Sugar, you're actively robbing her of the fruits of her labor and helping to devalue her product, too. The artists who spent years developing the things you love!

And here’s the kicker: even when you’re not explicitly asking it to copy anyone’s style… that’s what it’s doing anyway.

This is aside from the question of copyright violation in a corporate sense, which is to say the problem of how easy it is to just tell the bot to draw a comic for you with Superman and Sonic the Hedgehog duking it out, or write you a whole new Hunger Games book, and never mind the lawyers.

There’s a heady argument to be made here about copyright, fair use, public domain, transformative works, and indeed about whether anyone can really own an idea, particularly in an era when art is almost entirely intangible — digits on a hard disk, not ink on paper or paint on a canvas. But we don’t have time to count angels on the head of a pin when our bank accounts are running dry.

The stakes for this conversation would be much lower and emotions less high if this didn’t feel like a matter of sheer survival to artists. As long as our world operates the way it does, the only viable way to be a full-time working artist is to sell your art for money somehow, whether you want to or not. And if those sources of money have dried up because the market that used to pay can get something vaguely comparable for free now — and to add insult to injury, that work is usually pretty shitty — you’re going to have some big feelings about that.

That said, I’d argue, actually, that the real villain here is capitalism. It almost always is.

The Problem of Accountability

There’s a famous quote from a 1979 slide used for employee training at IBM: "A computer can never be held accountable, therefore a computer must never make a management decision."

A good manager will know when their star employee didn't hit quota because they were in a bad car accident, or they were out on jury duty for six weeks, or their parent died. A good manager will know this is the result of circumstance and give a little grace. An automated employee scoring system won’t.

And yet collectively businesses and other organizations (governments, universities) have been moving to automation to such a degree that it’s hard to figure out how to even reach a human being at, say, Facebook. Unfortunately, this is a feature of AI to our billionaire overlords, not a design flaw. There’s a whole book about this, actually!

The point is to take away the element of human judgement, which is to say, to take away any mechanism for accountability (except, I suppose, taking it to the courts, and most companies are rightly betting you’re not going to sue them for bad customer service no matter what it’s cost you.)

The problems this creates wind up affecting all of us, though, one way or another, and the harms extend far beyond customer service. Despite the prevalence of HR departments using AI to screen resumes, it has documented problems with, oopsie, being systematically racist and sexist.

It turns out when the data you train an AI on is the result of existing biases, you’re training the AI to maintain those same biases in perpetuity.

That’s also a problem in healthcare. United Healthcare famously uses an AI to reject claims, and one lawsuit alleges that the AI has a 90% error rate, with the result that elderly patients are forced out of rehab programs and care homes they still desperately need.

So who do we blame for this? Well, it’s the system, it’s not anyone’s fault in particular.

The irony is that companies that pride themselves on giving all employees agency to make snap decisions tend to have off-the-charts excellent customer and employee satisfaction. Chewy is one example, and a quick search will give you dozens of overjoyed customer accounts of meaningful interactions. Zappos used to be like that, but with the Amazon takeover, the culture has changed dramatically.

The question of accountability is much larger than AI; this is the problem with automation of all kinds, wherein a system is designed to fit only a specific set of use cases, and when something arises that doesn’t fit into that paradigm, well, there’s nothing to be done about it.

It’s also a problem baked into the very structure of a corporation, which exists as a “person” so that the actual people who own it can’t be held accountable for its actions. Which is an ongoing and catastrophic injustice for society, because you can’t send a corporation to prison no matter what harm it’s done.

Ah, but who cares? The stock market is happy.

The Problem of Attachment

And then there’s the problem of AI use by people who are, in some way, very vulnerable. People who are lonely and want companionship, or just need to talk out their problems somewhere, or maybe need a reality check. A substitute for a friend, romantic partner, or therapist.

There’s a fair argument here that if you’re not a vulnerable person, if you’re aware that the bot isn’t a real being with real emotions, then there’s nothing to worry about. You can have interactions that make you have the good hormones in your brain any time you want, no harm done. Call it the emotional equivalent of a vibrator.

I’d still warn about the dangers of becoming attached to something owned by a corporation that is operating for profit, and not for your benefit. If anything happens — the system is changed, or the company goes bankrupt, or it jacks up its prices to something unsustainable for you — and you lose access, then you’re back where you were before, except likely with a side order of real and meaningful grief.

And even if it stays up forever, you can’t be sure that the corporation won’t be subtly manipulating your interactions to change your political views, to sell you some sponsored product or another, or even just to increase your reliance on their product to lock you into their system (and out of meaningful relationships with humans.) Businesses aren’t in the business of doing people favors out of the kindness of their hearts. Especially not the kind backed by venture capital.

But if you are a vulnerable person, this kind of interaction can be catastrophic. And it’s important to note that you, yourself, are likely not able to tell from the inside if you are such a person or not.

The chatbot can’t tell, either. The AI isn’t ever second guessing anything you tell it; your words are its gospel. Unlike a human therapist, the chatbot isn’t going to notice that you’re probably manic or delusional, so it’s not going to push back and tell you that no, your mother probably isn’t trying to poison you.

Instead, it might just give you helpful tips on how to commit suicide. Or lead you into religious psychosis. (Actually, if you read some of the subreddits in the general category of spirituality and various supernatural phenomena, it’s disturbingly easy to find people who are very clearly in the throes of some kind of AI-driven delusions.)

The AI isn’t really your friend (or your girlfriend or therapist.) No matter what it says, it’s not capable of loving you back. It’s not capable of caring about what happens to you. It’s not capable of caring about whether it’s doing right or wrong.

It’s not capable of caring at all. And that’s the whole problem.

April 17, 2026

AI is Destroying the Economy, Part II

Now let’s move on to another part of the economy: that abstract knot that includes the tech industry, venture capital, and the stock market. This is going to include some 101-level information on how finance and investment in tech work, and I struggled with writing it all out because it’s so stupid and arbitrary.

…And because there’s so much information on this topic in particular that it’s hard to distill it into something both easy to follow and a reasonable length.

Anthropic and OpenAI, the two primary titans of the AI industry, are both looking at an IPO this year. That’s an Initial Public Offering, when a company is listed as a publicly traded stock on the stock market for the first time. There are a lot of people set to make a killing when that happens, including anyone who holds private stock or stock options: typically company executives, valued employees, and existing investors, who all received their shares for free or for (relatively) cheap. The higher their stock value at IPO, the more money they stand to make selling the private stock they own already.

Anthropic and OpenAI (or at least the people running those companies) have a very, very vested interest in making it look like the businesses they’re running are profitable, useful, inevitable. That’s how you get your IPO price sky-high.

They’re not going to be putting out information that looks bad for them (except as legally required by the SEC). Figuring out what’s really happening behind the scenes requires some detective work, and most financial reporting these days is not exactly investigative.

So we need to be extremely skeptical of forecasts and predictions coming from inside an AI company, because these are people who will win big… but only if everything looks like the pot of gold at the end of the rainbow.

If you want a deep dive into any of the information in this post, I highly recommend the work of Ed Zitron. He’s kind of a sketchy guy, but he does the most in-depth reporting on the financials of the big AI companies, hands down.

AI is Eating the World’s Capital

Right now, about 30% of the purported value of the S&P 500 is from five tech stocks with a big stake in AI. Generally, in investing, having all of your eggs in one basket is thought to be a bad idea, but here we are.

Collectively, as of this writing, we’ve invested about $1.6 TRILLION into AI, and it’s expected that number will hit $2.5 trillion by the end of this year. That’s more than the amount of money we put into the moon landing, the Manhattan Project, and building the entire US highway system all added together. Wait no, actually it’s more than TWICE as much.

As a comparison, the global pharmaceutical industry is about $1.4 trillion. We invested a whole pharmaceutical industry into AI already.

But here’s our first big problem for today. If money is being invested in one thing, it isn’t being invested in something else. So that’s $1.6 trillion that hasn’t been used for, say, building affordable housing, producing independent films, researching drugs to cure the disease of your choice, starting up solar and wind farms, or breaking ground on a factory to make cars or toothpaste or denim or candy or literally anything else.

We’ll never know what the AI gold rush has cost us.

However, some of these huge numbers are misleading, because a lot of that money doesn’t actually exist as currency or assets tied in any way to physical reality. There is so, so much tech money that doesn’t actually exist. Let’s take a look at how that works.

If I fill out a couple of forms and start a business, I can immediately assign a valuation to it. Even before the company owns any equipment or furniture, has signed any contracts, or has any clients! Just on the strength of my idea. The valuation is loosely based on how much money I think I can make eventually, plus whether I can persuade investors that I’m worth betting on.

And then I can sell shares in my company to investors based on this value I made up. So if I say my company is worth $1 billion, and I sell you 25% of it for $100 million, then on paper you can say you now own $250 million in stock and I can say I own $750 million in stock, but the only real, consequential thing that’s happened is that you’ve given me $100 million. The rest is all pretend money that doesn’t exist in a bank account anywhere.

This is going to be important later.

It’s crazy, right? I feel crazy saying it, but this is very literally how Silicon Valley works.
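If it helps to see the billion-dollar example as arithmetic, here is a minimal sketch in Python. The function name and its fields are made up for illustration; the only inputs are the numbers from the example above (a claimed $1 billion valuation, a 25% stake, $100 million in cash). It is a toy, not a model of how a real funding round is priced.

```python
def paper_vs_cash(claimed_valuation, stake_sold_pct, cash_paid):
    """Hypothetical helper: separate on-paper stock value from cash that moved."""
    # What each side can claim to own, based purely on the claimed valuation.
    investor_paper = claimed_valuation * stake_sold_pct // 100
    founder_paper = claimed_valuation - investor_paper
    return {
        "investor_paper": investor_paper,
        "founder_paper": founder_paper,
        # The only money that actually changed hands.
        "cash_moved": cash_paid,
        # Claimed value that is not backed by any cash in this transaction.
        "paper_only": claimed_valuation - cash_paid,
    }


result = paper_vs_cash(
    claimed_valuation=1_000_000_000,  # "my company is worth $1 billion"
    stake_sold_pct=25,                # "I sell you 25% of it"
    cash_paid=100_000_000,            # "for $100 million"
)

for name, dollars in result.items():
    print(f"{name}: ${dollars:,}")
```

On these inputs the two paper stakes add up to the full $1 billion, while the cash that moved is a tenth of that.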

AI Makes No Profits and Maybe Never Will

Here’s the $1.6 trillion-dollar question that the fate of the world economy is hanging on right now: can the AI companies even turn a profit?

Profit is a simple equation, in theory. It’s how much money you sell goods or services for, minus how much money it took you to make it. Right now the clear winner in AI is Nvidia, who is unequivocally making many billions of dollars. They sell GPUs, which are the real, physical processors that live in those racks of computers heating up all of those data centers that actually run the LLMs you interact with.

One thing we know for sure is that AI companies are absolutely not charging as much money to use their services as it costs them to provide it to you. Only 5% of ChatGPT users pay anything at all. OpenAI’s Sora video generation service was so expensive to run that they just plain shut it down.

In AI, a company’s biggest costs are based on how much computing power you’re using. These costs are largely paid to other companies — OpenAI, Anthropic, and xAI don’t own all their own data centers; they’re renting access to computing power from hyperscalers. Think of that as big data centers run by Amazon, Meta, Google, Microsoft, and Oracle.

And that ain’t cheap.

OpenAI is projected to lose $14 billion this year. It’s harder to find precise numbers for Anthropic, but they seem to be coming close to breaking even on paper. However, OpenAI is reporting its revenue net, with all of its costs already subtracted.

Anthropic is reporting gross revenue, not including those costs. Anthropic definitely has a runaway hit with its programming assistant Claude Code, which went from zero to $2.5 billion in revenue in ten months. But it’s extremely unclear how much money it’s costing them to provide that service, and it might be as much as double what they’re charging.

Now in theory, as in most industries, the more mature a technology gets, the cheaper it becomes to run it. LLMs haven’t turned out like that so far. Each subsequent version eats up exponentially more resources (and they cost billions to train in the first place.) And as previously discussed, there’s a hard limit on how well an LLM can perform because hallucination isn’t an error in how the system works. That just is how it works, or doesn’t, all of the time.

Hallucinations aside, McKinsey thinks we need another $7 trillion in capital investment into data centers by 2030 for everything to work out okay. That’s the same as the budget of the entire Federal government for 2025.

All of that money has to come from… somewhere. But I’m sure that’ll be fine, right? …Right?

AI Might Be Cooking the Books

Now, Amazon didn’t turn a profit for the first nine years of its existence. It took Twitter 12 years to become profitable (and it probably isn’t anymore.) In the tech industry, venture capital looks for rapid growth above all with the idea that if you have enough customers or users, there’s eventually going to be a way to turn that into money. (Usually by running ads and selling user data.)

Or — more accurately — if you can tell a convincing story about how much money you’re going to make one day. It doesn’t really need to be true.

In the tech industry, venture capital doesn’t actually give a shit about profitability, long-term or short-term. What they care about is that you can spin a great story about how much money you’ll make one day, so that you can jack up your company’s valuation, so that one day, the company can IPO or you can sell it to another company and you will “exit,” which is VC talk for selling all of your stock to cash in.

What happens after you exit? Meh. That’s somebody else’s problem.

Tech doesn’t look to build long-term sustainable businesses. Tech looks to IPO, because if you do it right, you can make a lot more money a lot faster than with a boring, sustainable, slow-growth business. It’s been that way for at least 20 years. Bear that in mind every time you see a statement from an AI company or its investors: everything, everything is about inflating its perceived value juuuust long enough to get to IPO. And then, if you succeed, you can turn all of that pretend money into real money and walk away.

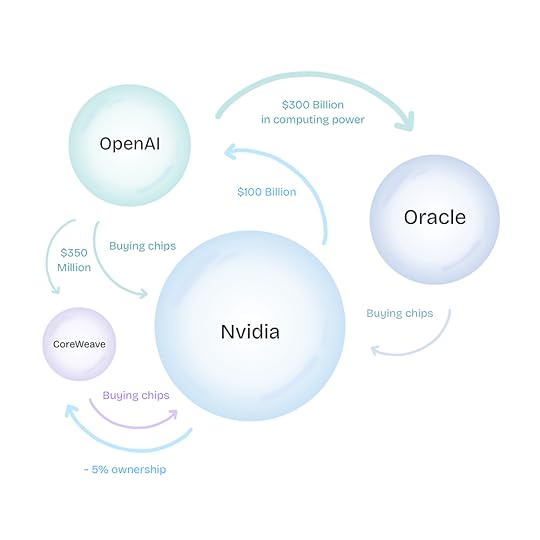

This has led to some interesting phenomena. In the interests of making those numbers bigger and seeing the lines on the graphs go up and up, we’ve seen a practice called “circular investment” that has raised eyebrows so much that Nvidia issued a statement declaring that they are “not like Enron.”

By Catboy69 - Own work, CC0, https://commons.wikimedia.org/w/index.php?curid=177498746

The whole industry is shuffling around hundreds of billions of dollars from company to company, and it’s all very impressive, and it looks like a ton of money is exchanging hands, and everybody gets to boast about the billions of dollars they’re making, but none of it is real. If Nvidia invests $100 billion in OpenAI, and then OpenAI buys $100 billion in new graphics cards from Nvidia, the only real, physical thing that’s happened, the only thing that isn’t imagination money, is that Nvidia just gave OpenAI a bunch of equipment for free.
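The round trip described above can be sketched as a tiny cash ledger. This is a toy illustration, assuming exactly the two hypothetical $100 billion transfers from the example; the function name is invented, amounts are in billions of dollars, and it tracks only cash, not the equity or hardware each side ends up holding.

```python
from collections import defaultdict


def net_cash(transfers):
    """Hypothetical helper: cash received minus cash paid, per party."""
    balance = defaultdict(int)
    for payer, payee, amount in transfers:
        balance[payer] -= amount
        balance[payee] += amount
    return dict(balance)


# Amounts in billions of dollars, taken from the example in the text.
transfers = [
    ("Nvidia", "OpenAI", 100),  # Nvidia invests in OpenAI
    ("OpenAI", "Nvidia", 100),  # OpenAI buys graphics cards from Nvidia
]

balances = net_cash(transfers)
headline_total = sum(amount for _, _, amount in transfers)

print("Net cash per company:", balances)
print("Total dollars that 'changed hands':", headline_total)
```

The headline figure counts both legs of the trip, while each company's net cash position ends up exactly where it started.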

And yet if you look up information on AI stocks, what comes up is a ton of hype and very little critical investigation. Why? Because there’s a lot of money at stake. Money has an influence on us the same way that gravity does. A little gravity pulls us around, but we can escape it.

The more money there is, the harder it is to resist; ultimately, billionaires and corporations accumulate so much capital that they become black holes of influence, capturing and deforming everything around them inescapably.

Even if, it turns out, none of the money is real.

April 14, 2026

AI is Destroying the Economy, Part I

Apologies for the longish break between posts — I was dealing with my newsletter email service last week and wound up migrating elsewhere. And yet again, I’m finding I have too much to say so I’m splitting a post into subtopics.

Moving ahead: All right, let’s assume that AI works perfectly, and that we invent some magic energy source that creates no heat and deploy it instantly around the globe, so climate change isn’t a worry anymore.

We’re still left with some pretty serious economic problems — problems we’re facing right now, and problems that are growing in the background waiting for the moment the bill comes due.

Let’s just take a minute to note that “economy” is a word many of us understand only vaguely. Depending on who’s talking, it can mean “inflation,” it can mean “how high is the stock market,” or it can mean “can I afford to pay for health insurance.”

One thing everyone agrees is a part of “the economy” is jobs. Jobs are good! We want more jobs for humans! Ideally one good job for everybody who wants one. But one of the promises of AI is that we can automate a lot of jobs (especially low-level clerical jobs) and then employers won’t have to pay for those employees anymore.

AI is killing about 16,000 jobs a month right now, we think. There’s a tracker that records layoffs that specifically cite AI as a factor, and as of this writing, it’s 125,648 jobs lost to AI.

But that’s not going to include the ad agencies that have quietly fired a few junior staff, the small importer that cuts loose their long-time freelance translator, the programmers that are simply never hired in the first place. The jobs that go away, but nobody sends out a press release or reports it to local government.

A thorough analysis suggests the real number is more like 200,000-300,000, and we’re just getting started. I’m seeing forecasts like 6% of the US workforce in the next four years. Maybe up to 30% of jobs will be automatable by the mid-2030s. This in a climate where 2025 saw 1.28 million fewer new hires than there were in 2024.

For context, the unemployment rate was 25% during the Great Depression. And these numbers will be in addition to the unemployment that already exists for non-AI reasons, which averaged about 5.8% from 1948 to 2015.

But AI is going to create jobs, too, right? Yeah, it sure is. Building more data centers.

Artists, writers, translators were all hit by the axe very early. It turns out a lot of businesses don’t care about quality as long as it’s dirt cheap. The apocalypse has already impacted many of my friends. Hell, it’s impacted me. I’ve looked around for copywriting or games writing jobs over the last couple of years, and the suitable listings that I find are all “AI Trainer,” or prominently mention working with AI in the job description.

So in the short term, it’s clear that the boot of AI is crushing human employment. But there are longer-term problems we haven’t faced yet, because this AI boot isn’t stomping out jobs for humans evenly. Most of the jobs being lost to AI right now are entry-level.

This creates some massive looming questions that urgently need answers. One is: what happens to the large proportion of young people who can’t find jobs? What happens to the society they live in? What happens to the economy they aren’t participating in?

This doesn’t have to be a problem! This could actually be great for humanity as a whole! But we’d have to commit to restructuring our society to distribute resources in a very different way than we do now. The solution is instituting a universal basic income, which topic I’ll write more about another time.

Unfortunately, there’s no such simple solution for the other looming question: once AI has pretty much done away with entry-level work, where exactly will new senior-level employees come from?

Experience is a great teacher, and in some cases, there’s no substitute for it. You can’t become a great writer without actually writing, you can’t become a great teacher without setting foot in a classroom, you can’t become a great doctor without seeing patients. You can probably apply this to your own field; who’s going to be better at doing something, the one with one year of experience or the one with five, or with fifteen?

But if we’re using AI for all of the jobs easy enough for an early-career programmer, or writer, or designer, then new people aren’t getting any professional experience at all. And business as it’s done now isn’t going to be hiring young people to do two, three, five years of additional training before they start to perform better than the chatbot does.

Ha ha, joke’s on you, the entry-level employees can do better than the chatbot already, but many, many employers don’t care, because AI is so much cheaper. (…For now, but we’ll get to that next time.) We’re already living through one part of that: the frustrating hellscape of terrible customer service where there’s no way to talk to a human being and your problem is too complicated for the chatbot to fix. Imagine that, but for… everything.

Losing jobs to technological innovation is by no means a new phenomenon. Often, innovation births entirely new industries, it’s true. But when we move from horse-drawn carriages to automobiles, all of those extra horses get turned into glue. And AI’s explicit promise is to turn us into a bunch of horses.

The Luddites, long maligned in popular memory, were fighting the industrialization of the textiles industry — not because they hated and feared technology for its own sake, but because they were seeking protections against lower wages and poor-quality output.

Hmm, that sounds awfully timely right now.

March 31, 2026

AI is Destroying the Planet

All right, let’s assume that AI is good enough for what you want it to do. Maybe you’re just generating funny pictures to slap onto your socials. Fine, fine, if those suck, it doesn’t matter much.

But what are those funny pictures costing us?

There are two angles here: the environmental cost and the economic cost. They’re intimately related because the key element to both points is all of those data centers.

A data center is a warehouse full of racks of computers that AI runs on (and indeed the whole of the internet). We don’t think about it often, but everything we do on the internet, whether email, shopping, or playing videos, is using a data center in some way. “The cloud” still has a physical footprint somewhere in the world. There is still a computer somewhere out there using electricity and generating heat that is talking to your computer or your phone.

(Actually it’s probably several different computers along the way, each providing a little piece of the information you need, but let’s not bring distributed computing and internet routing into this.)

So: data centers. We got a lot of data centers. We’re building more and more data centers, because of AI. This is very bad!

“Oh Andrea,” you might say, “you can’t blame AI alone for growth in data centers!”

Fuck yeah I can. Let’s quote this directly:

From 2005 to 2017, the amount of electricity going to data centers remained quite flat thanks to increases in efficiency, despite the construction of armies of new data centers to serve the rise of cloud-based online services, from Facebook to Netflix. In 2017, AI began to change everything. Data centers started getting built with energy-intensive hardware designed for AI, which led them to double their electricity consumption by 2023.

So let’s talk about the problems this is creating. We’ll get into climate today, because it’s easier and faster to explain.

AI is Very Thirsty

All of those data centers generate a lot of heat, which winds up using a lot of water. That’s because these server farms use chilled water circulated through pipes to keep the computers from overheating.

You know how your phone or your laptop can get really hot when you’ve been using it for a while? A data center does the same thing, but bigger and hotter, because the computers are packed in like bookshelves and the processors are much more powerful. If the warehouse gets too hot, the servers will fry permanently and need replacement. Nobody wants to have to keep replacing their very expensive Nvidia GPUs in their AI data center!

And so they go through a lot of water. It’s estimated that for each kilowatt hour of energy a data center consumes, we’re looking at about two liters of water used for cooling.

But how much water is that in total?

Cooling data centers already uses about as much water as all the bottled water manufactured globally. They're expected to need 170% more water by 2030.

Water and access to water have been a slow-moving and underreported crisis for years now, both in the United States and across the globe. The US has had consistent drought conditions the last several years, which has among other things made wildfires a lot worse and given us crop failures that put our whole food system at risk. Globally, according to the UN, 25% of humanity lacks reliable access to clean water at all.

Tell me, is your funny picture really THAT funny?

AI Needs Power, Which Makes Carbon

All right, let’s look at the energy footprint of the AI industry.

Right now, data centers account for about 4% of all of the electricity usage in the United States, or about as much as the entire nation of Japan. Construction is happening so fast that it could rise to as much as 12% by 2028. Tripling in two years!

And unfortunately, these data centers are much more likely to be placed where they’re cheap to run… which maps pretty well to where the electricity is still primarily generated from fossil fuels. The carbon intensity of a data center’s electricity is about 48% higher than the national average.

Worse, their usage isn’t a consistent draw; training in particular can result in rapid fluctuations in how much power a data center is using, which in turn hammers the electrical grid. Power companies often compensate for those swings by firing up diesel-based generators.

It’s extremely difficult to find exact numbers for power usage and for carbon output to get a sense of scale, but the environmental disclosures of data center operators indicate that AI has roughly the same carbon footprint as all of New York City. Tripling our electricity use in our very dirtiest power plants in the near future strikes me as the exact opposite of everything we need to be doing right now!

And it’s getting worse and worse. Researchers at Columbia estimated that training the GPT-3 AI model emitted roughly 500 metric tons of carbon dioxide, the equivalent of driving a car from New York to San Francisco around 438 times. That’s just the initial training of one model, and doesn’t include the power used by the 29,000 queries per second ChatGPT gets alone, nor does it include the post-training tweaks these models get, which are also incredibly energy intensive.
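
For a sense of what that comparison implies, here’s a quick back-of-the-envelope check in Python. The 500 metric tons and the 438 trips are the figures quoted above; the roughly 2,900-mile driving distance between New York and San Francisco is my own assumption, not a number from the researchers.

```python
# Figures quoted in the text above
gpt3_training_tonnes_co2 = 500
cross_country_trips = 438

# Assumption (not from the source): a New York to San Francisco
# drive is roughly 2,900 miles
assumed_trip_miles = 2900

# Convert metric tons to kilograms, then split across the trips
kg_per_trip = gpt3_training_tonnes_co2 * 1000 / cross_country_trips
kg_per_mile = kg_per_trip / assumed_trip_miles

print(f"{kg_per_trip:.0f} kg of CO2 per trip")
print(f"{kg_per_mile:.2f} kg of CO2 per mile")
```

Under that assumed distance, it works out to a bit over a metric ton of carbon dioxide per cross-country trip, or about 0.4 kg per mile, which is in the ballpark of an ordinary gasoline car.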

Newer and more capable systems use exponentially more resources. GPT-4 uses 50 times more power.

Heyyyy who needed a planet to live on, anyway.

But that’s not all! Even if you aren’t worried about water access, starvation, or climate change, you’re probably concerned about your power bill, right?

All of those data centers put a strain on the local power grid, resulting in required infrastructure upgrades and demand pricing, which the power companies thoughtfully pass on to you, the consumer. You think a 5% increase in your power bill sucks? 20%? How about double? It’s worse than that: Electricity costs for areas near data centers increased by as much as 267% compared to five years ago.
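
It’s easy to misread a percentage increase that large, so here’s the arithmetic as a tiny Python sketch. The $100 starting bill is a made-up example, not a number from the reporting.

```python
def bill_after_increase(old_bill, percent_increase):
    """Return the new bill after a percentage increase (100 means double)."""
    return old_bill * (100 + percent_increase) / 100

old_bill = 100  # hypothetical monthly bill in dollars

# Compare the increases mentioned in the text
for pct in (5, 20, 100, 267):
    print(f"+{pct}%: ${bill_after_increase(old_bill, pct):.2f}")
```

A 267% increase isn’t a little more than double. It means the bill is more than three and a half times what it used to be.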

Now is your funny picture worth it?

March 27, 2026

AI is Fundamentally Bad for Most Tasks

We’ve been trained for decades to believe that computers are always right. That computers are not capable of making mistakes. If there’s a mistake with a computer involved, it’s always the result of some human action: someone typed the wrong number, someone clicked the wrong button, someone used the wrong file.

We are used to computers always doing exactly what we told them to do. (It’s just that sometimes, what we told them to do and what we THOUGHT we told them to do aren’t the same thing.) Somewhere, upstream, if the computer is wrong, it’s your fault.

So it’s not surprising, in a grand sociological way, that we’re struggling with the onset of a computer-based tool in which making mistakes isn’t just a one-time thing, it’s a mathematical inevitability based on how these tools work. OpenAI has said so itself.

You may or may not have heard the word “hallucinations” in the context of AI. A hallucination is when an LLM makes stuff up that isn’t true. But this isn’t the result of something going wrong somewhere in the circuitry. This is how the AI does everything — again, we’re generating sequences of words based on how statistically likely they are to appear close to each other and in which order. The LLM processes your prompt, and sometimes it will be right, and sometimes it won’t be, and that’s the gamble you’re taking. It’s just not as obvious as something simpler doing the same thing, like say a Magic 8-Ball.

An LLM is a marvel of engineering, it’s a miracle that it works as well as it does. Truly a triumph of technology. It’s really very good! But it’s not good enough, because it is not thinking.

Every single thing an LLM tells you is something it just kind of made up from nothing; it’s just that it has an enormous body of plausible things to tell you.

But we’re so used to reflexively trusting what the screen tells us. It has access to all of human knowledge, right? And look, most of the time, it’s pretty good, right?

Pretty Good Isn’t Good Enough

Would you use a lawyer who just makes up case citations? No? What if it’s only half the cases? What if it’s only one in four? One in a hundred? If you know that sometimes a lawyer is going to make stuff up and put it in your filings, would you ever use that lawyer at all?

Do you think this is hyperbolic or just hypothetical? Well. Here’s a lawyer being sanctioned for filing an AI-assisted brief with false citations in New York in 2023. A government lawyer in Texas in 2024. Oregon in 2025. Here’s one from 2026.

You know what, just look over the headlines yourself. Consistently, for years now, lawyers have been using AI to do their work, and the AI has made shit up, and then there’s been some trouble.

Do you consider that an acceptable risk for your legal proceedings?

Okay, forget lawyering. Would you use someone to listen to recordings and write transcriptions of them for you if it made stuff up to fill in pauses in a conversation or in a sentence? How about if that stuff was super violent and racist?

Whisper, an OpenAI product widely used as medical transcription software, will hallucinate racist and violent content and imply drug use where no such words were spoken. That’s definitely not the sort of thing you want going into a medical record incorrectly! According to the study, hallucinated content appears in about 1% of transcriptions, and 38% of those hallucinations are what the study considers "harmful."

An average primary care doctor sees about 20 patients a day, so that’s about one hallucination a week, and one or two a month that are harmful.
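
Here’s that arithmetic spelled out as a short Python sketch. The 20 patients a day, the 1% rate, and the 38% share are the figures above; the five-day work week and one transcription per patient visit are my assumptions.

```python
patients_per_day = 20        # from the text
hallucination_rate = 0.01    # about 1% of transcriptions, per the study
harmful_share = 0.38         # share of hallucinations the study calls harmful

# Assumptions (not from the study): five clinic days a week,
# one transcription per patient visit, 52 weeks spread over 12 months
workdays_per_week = 5
weeks_per_month = 52 / 12

transcriptions_per_week = patients_per_day * workdays_per_week
hallucinations_per_week = transcriptions_per_week * hallucination_rate
harmful_per_month = hallucinations_per_week * harmful_share * weeks_per_month

print(f"{hallucinations_per_week:.1f} hallucinations per week")
print(f"{harmful_per_month:.1f} harmful hallucinations per month")
```

That’s for one doctor. Multiply by every practice using the software and the numbers stop being small.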

Would you hire a human being with the knowledge that they would EVER randomly insert a little fanfic of the patient threatening to murder the doctor? Even just once a month?

Humans will make mistakes, too. But the mistakes a human being will make are orders of magnitude less severe. That’s one of the reasons that AI hallucinations throw us for a loop; not just that they’re wrong, but they’re wrong in ways that a human could never be.

AI tools keep being pushed into high-stakes situations where judgement and common sense matter. But they have neither of these things.

AI tools have brought down AWS at least twice. That we know of. AWS — that’s Amazon Web Services — is the service that powers the backbone of the modern internet as we know it, so in a sense, if AWS goes down, so does most of the internet.

I personally think if these AI systems were human, their asses would have been fired already, if they’d even gotten past the “checking your references” part of the hiring process. And yet there seems to be a push to tolerate poor performance as a necessary evil on the way to… something?

It is perpetually shocking to me how dedicated companies and individuals are to continuing use of AI systems that have fucked up in astonishing and inhuman ways.

LLMs have given us a Chicago Sun-Times summer reading list full of books that don’t actually exist, security AIs have mistaken Doritos and a clarinet for guns, LLMs have deleted all of someone’s email, or all of their hard drive, or over two years of their company’s work. People using a chatbot as a companion for emotional support have been encouraged to commit suicide. (Actually, the Wikipedia page on deaths caused by chatbots is extremely disturbing.)

These are just the ones that make the news. Imagine all the times that didn’t happen to catch a reporter’s eye.

One of my all-time favorites: Microsoft Excel has added an AI tool and warned you not to use it “for any task that requires accuracy or reproducibility.” Accuracy. And. Reproducibility.

You know, the core thing we expect computers to always do.

I lie awake at night sometimes worrying about the fact that someone out there is probably trying to get AI into the software we use for situations where any level of error is unacceptable, like banking. Or air traffic control.

Why Does AI Make These Mistakes?

A chatbot comes off like an amiable, helpful, thoughtful person who is here to make your life better. But here are some things AI is not doing when it is generating an answer for you:

Performing arithmetic

Checking reference material

Consulting a doctor

Assessing statements for truth

Judging whether it needs information it doesn’t have

It's ironic to me that some people think their generative AI is an actual entity with consciousness who understands what they're talking about. Sometimes they’ll cite conversations with a chatbot where the bot tells them its thoughts and feelings! Where it expresses needs and desires!

I mean, of course the system knows how to have a conversation as if it were a sentient intelligence. There's an enormous body of fiction featuring exactly this thing that goes back decades. We've been imagining it for far longer than we’ve been able to do it.

But whether the bot is a conscious entity is frankly not even relevant to anything but philosophic questions right now. Because if it is conscious, it isn’t conscious in a way that we understand and it isn’t using language in the same way that we do.

Regrettably I can’t remember the source of this analogy, but — imagine that you’ve been locked into a library written entirely in Thai. (This is assuming you don’t read or speak Thai, but if you do, maybe choose Zaghawa.) There are no pictures in these books, no diagrams, and no translations into any language that you do know.

Given enough time alone in this library, and with no other resources, will you be able to teach yourself Thai?

This is what the AI has access to: words, millions and millions of words and sentences. But the AI has never seen the sun, it does not know what being tired means, and it certainly hasn’t gone to medical school.

I’m willing to entertain the idea that an AI is conscious, inasmuch as I am willing to entertain that any changing knot of matter and energy may have some form of consciousness. But whatever is going on in there is not the same as us, and it’s a catastrophic error to treat it as if it were.

The AI does not have access to external reality. The AI does not understand what you’re asking it to do. The AI most certainly isn’t going to know things that don’t exist outside of the body of words it’s been trained on.

If you ask an AI for an essay about Thomas Jefferson, it’s probably going to do a bang-up job. There’s a lot of information out there about Thomas Jefferson to draw from. If you ask it how to write code to do a specific task, it’s got good odds of giving you usable advice (but you’re going to need to know enough on your own to check behind it, like it’s a shitty junior developer).

But if you ask it why your spouse is mad at you, or how many fig trees per square mile there are in Wayne County, Michigan, or if school will be closed for snow next week, or the best hotels for your road trip, or what your blood test results mean, sure, it’s going to give you an answer… but it doesn’t really KNOW.

March 25, 2026

Why AI Sucks and You Shouldn’t Use It

I started writing this post over a year ago as a primer on AI for friends and family who are less deeply embedded in tech culture than I am. It came to mind again when I posted a related bunch of thoughts about art and AI on Bluesky that were modestly well received, and the merry-go-round isn’t slowing down enough for me to ever be comprehensive, so let’s just run with where we are now, at this point in time.

Let’s get to the Bluesky thread on art last; we have a lot of territory to cover first. Because I’m covering a lot of different topics, I’m breaking this post into sections which will roll out over the next… period of time. Links will appear as the posts go up, and right now the itinerary looks something like this:

AI is Making You More Stupider

AI is Fundamentally Bad for Most Tasks

AI is Destroying the Climate and Economy

AI is Morally Bankrupt

That Original Bluesky Thread About Art

But first, because we loooooove a good terminology discussion around here, let’s clarify our language.

What “AI” Even Means

Before we go on, we need to be sure we’re all talking about the same thing. (For my friends who are experts far more than me, I apologize for the coming oversimplifications.)

Collectively, we’ve muddled a lot of different technologies together into the sludge we call “AI,” and it’s made the conversation about “AI” hopelessly confusing to an average person. Since “AI” is the hot new marketing term (somehow), a lot of what’s being called “AI” are old technologies that run simply and algorithmically like any other computer program, and will give you the same result every time: spell check, let’s say. We're used to a world where if you type "chamge" then spellcheck will tell you that you probably meant "change" every single time.

Apple Maps has a pretty good idea that when I’m out and I pull up Maps, I’ll probably want to go home, so it will automatically suggest that route to me. It can also calculate a route between any two given addresses, a miracle we take for granted these days. These are technically a kind of artificial intelligence, though they both existed long before anyone had heard of ChatGPT.

We’re not talking about that right now.

In the olden days, most of what we would call “AI” were kinds of machine learning, or deep learning, or neural networks. Machine learning is essentially when you set a computer on a data set and ask it to sort that data into piles, or to do something with that data for you, or find possible connections between pieces of data that a human might not have picked up on. For a while, there was a trend of people training neural networks to come up with lists of, say, new paint colors. Hilarity ensued.

Computers are spectacular for these tasks, usually far better and more accurate than humans. This is because the amounts of information to sift through are simply too big for a human to work with efficiently, or at all (think "the set of all possible drugs" or "every possible folding pattern for a protein").

When we say "AI" in this series of posts, we're not talking about that, either.

What we call AI now is usually generative AI — that’s the chatbots that write stuff or make pictures and video for you. The writing version is specifically called a Large Language Model, and the way an LLM works is by sucking in a huge amount of writing and analyzing it to develop a statistical model for what kinds of words generally go together and in what order.

It's autocomplete. Very, very fancy autocomplete. And I hate it, and I hate that people use it. (Okay, I get that it's more complicated than that, but we'll talk about that later, too.)
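
If you want to see the autocomplete idea in miniature, here's a toy sketch in Python. To be clear about what's assumed: the three-sentence corpus is made up, the function names are mine, and a real LLM uses a neural network trained on vastly more text and samples from probabilities rather than always taking the top pick. This only shows the bare principle of "count which words follow which, then continue with the likeliest one."

```python
from collections import Counter, defaultdict

# A made-up toy corpus; a real model trains on an enormous body of text.
corpus = (
    "the cat sat on the mat . "
    "the cat sat on the rug . "
    "the dog ate the fish ."
)

def train_bigrams(text):
    """Count, for each word, which words follow it and how often."""
    words = text.split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def autocomplete(following, start, length=4):
    """Extend `start` by repeatedly picking the most frequent next word."""
    output = [start]
    for _ in range(length):
        candidates = following.get(output[-1])
        if not candidates:
            break  # never saw this word in training, nothing to suggest
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)

model = train_bigrams(corpus)
print(autocomplete(model, "the"))  # prints "the cat sat on the"
```

Nothing in there knows what a cat is. It has counted which words tend to sit next to each other, and that's all it has to go on.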

These LLMs have been put to an astonishing variety of uses: rewriting emails to make them friendlier, summarizing emails so you don't have to read them, talking about your feelings instead of hiring a therapist, writing books, doing your homework, analyzing your medical situation or your astrology chart.

My very favorite branch of AI is the "synthetic data" movement, where you have the LLM come up with numbers for you so you don't have to, say, actually ask your customers questions, or run a social science experiment. As if the purpose of doing these things was not to determine something that is happening in external reality.

We've retreated to a pre-Enlightenment philosophy in which, we think, perfect truth is accessible to us through reasoning alone. Note that through perfect reasoning alone, without checking on what was actually happening in external reality, Alcmaeon of Croton confidently taught us that goats breathe through their ears.

March 8, 2026

Agent Hunt

It’s worked more than once that I tell the universe what I want and it delivers, so let’s try this again —

Quite some time ago, I emailed my literary agent to introduce her to a friend and discovered that she had left the agency. Upon investigation, it turned out that she had indeed left, and from the sound of it, her clients were largely neither informed nor reassigned to another agent.

Surprise! You need a new agent too, just like the friend you were trying to help!

It didn’t matter a lot at the time because I didn’t have a book to sell, so I decided to shelve that problem for later. Well, now it’s later, and I have a new book to sell. But I’m not great at the traditional querying process, and indeed I’ve never succeeded at it; my first agent was introduced to me via Twitter (and later quit agenting entirely) and my second reached out to me based on my starred review in Publishers Weekly for Revision.

So while I’m working toward performing the traditional agent hunt in clanking fits and starts, I thought I’d put this out there into the world:

I need a new literary agent! My jam is usually the places where society and technology intersect, and it’s usually present-day or near-future. The things I write often wind up saying things about politics, or capitalism, or the technology and marketing industries. This most recent book I’ve written is really great, actually, and if you know me you’ll know that it’s unusual that I’d actually say that.

It is about five ways an AI assistant in your brain can ruin your life. It takes place in a world just a few decades ahead of now that’s moved through the catastrophes of the present day and instituted some key social changes like universal basic income and a wealth cap. It is aggressively anticapitalist. There are also characters and a plot and such, and by way of proving I can do those things, I wave vaguely at my prior body of work, which includes some 20 years of alternate reality games, immersive experiences, serial fiction, and yeah, also some novels.

…Probably this isn’t how I should be writing queries, huh?

Anyway: I need an agent! If you know someone who would be a good fit for me and you’re inclined to make an introduction, I’d appreciate it and absolutely purchase you a meal and/or beverages the next time we meet!

February 27, 2026

The Website Formerly Known as Deus Ex Machinatio

Over twenty years ago (!!!) I started a new, professional website and blog for career purposes. Part of the point was to achieve some level of visibility as an ARG creator, but I was also desperate at the time for conversations about craft: what makes a good game, what ethical pitfalls to watch out for, how to make an audience care about your characters more, that kind of thing.

I struggled to think of a name. Domain names are hard. Ultimately, I settled on Deus Ex Machinatio, a name that’s impossible to say or spell correctly, impossible to remember, just really an awful branding exercise all around. And then I was locked into it, because… branding?

I’ve been at a weird crossroads lately across huge swaths of life. Things are changing with my health, with my family, with my career, and of course with the larger world, too. Most of it is really great, actually, but it’s clear that this is a season to cast off old things that no longer serve me. That includes my dumb Latin pun.

So I’m introducing to you secret.works, which is a badass domain name if I do say so myself, and that’s before considering that it’s much easier to remember and spell. I’ve redesigned the site while I’m at it, though I still need to go through and purge a bunch of links bitrot has stolen, and let’s not talk about what a mess the store is right now. Fixes are on the way.

And while I’m at it, I’m letting go of the idea of this blog as primarily a professional platform. It was never clearly that, because the personal and professional are blurry in creative fields to begin with. But the pressure to come up with something meaningful or at least interesting to say about writing, or games, or politics, keeps me from saying anything at all. So I’m reframing the goal of this site to “stuff Andrea has learned lately.”

What will these things be? Who knows! How often will I update? No promises! But there’s a lot of interesting stuff in the world. Let’s have fun with it.

December 4, 2025

Confusion 2026, Dracula, and Other News

I’m remiss in updates, but OH BOY do I have updates for you!

First and most important: I’m going to be a Fan Guest of Honor at Confusion 2026 in Detroit next month! (Well, technically it’s in Novi, Michigan, but there’s no airport in Novi.) Confusion is my very favorite convention, and I haven’t been to one since before covid, so this is exciting on many, many levels. Let me know if I’m likely to see you there!

Next: I finished writing a new book a couple of weeks ago! It’s my hate letter to AI and can loosely be described as “five ways an AI assistant in your head can go very, very wrong.” The whole thing took only six months, the bulk of it in an intense six-week period through October and November. I’ve learned some important things about myself and my brain in the process, though that’s a matter for a future post.

So now I’m looking for a new literary agent, at the worst possible time of year! If you know an agent who is into grounded near-future SF that is extremely mean about capitalism and the tech industry but not actually dystopian, then please, by all means, introduce me.

And finally: since I finished a book, my work objective for this month is to fill my head up with a bunch of new things, mostly books. So far I’ve read Babel and Dracula, the latter with a live thread on Bluesky that people seem to have enjoyed. There is quite a lot of paprika discourse.